The H100 vs A100 decision is the most consequential hardware choice facing AI teams in 2026. Both GPUs are manufactured by NVIDIA, both are designed for data center AI workloads, and both are available across dozens of cloud providers. But beneath these surface similarities lie fundamental architectural differences that can make one GPU dramatically more cost-effective than the other for your specific workload. Choosing wrong means either overpaying for capabilities you do not use, or bottlenecking your training pipeline on hardware that cannot keep up.

This comparison goes far beyond spec sheets. We analyze real-world training benchmarks, inference throughput measurements, cloud pricing across major providers, and the specific workload profiles where each GPU excels. By the end of this guide, you will have a clear, data-backed framework for deciding which GPU to rent for your next project.

- The H100 delivers 1.7-2.4x faster training throughput than the A100 for transformer models, thanks to FP8 support and 67% higher memory bandwidth.

- The A100 80GB offers 35-45% lower hourly cloud costs, making it the better value for fine-tuning, inference, and models under 30B parameters.

- For pre-training models over 30B parameters, the H100’s superior performance typically results in lower total training cost despite the higher hourly rate.

- The A100 remains the safer choice for teams using older PyTorch versions or frameworks without H100-optimized kernels.

Architecture Deep Dive: Hopper vs. Ampere

The NVIDIA A100 is built on the Ampere architecture (GA100), launched in 2020. The NVIDIA H100 is built on the Hopper architecture (GH100), launched in 2022. Both are fabricated by TSMC, but the H100 uses a 4nm-class process (TSMC 4N) versus the A100’s 7nm, which allows significantly higher transistor density and better energy efficiency per FLOP.

The most important architectural innovation in Hopper is the Transformer Engine, which pairs FP8-capable fourth-generation Tensor Cores with software that manages precision for the matrix multiplications that dominate transformer model training. It chooses between FP8 and 16-bit precision on a per-layer basis and handles the scaling factors automatically, so you do not tune precision by hand once your framework integrates it. The A100 has no FP8 Tensor Core support, so this capability simply does not exist there.

Technical Specifications Compared

| Specification | H100 SXM5 | A100 SXM4 80GB | Advantage |
|---|---|---|---|
| Architecture | Hopper (4nm) | Ampere (7nm) | H100 |
| VRAM | 80 GB HBM3 | 80 GB HBM2e | Tie (capacity) |
| Memory Bandwidth | 3.35 TB/s | 2.0 TB/s | H100 (+67%) |
| FP16 Tensor TFLOPS (dense) | 989 | 312 | H100 (3.2x) |
| FP8 Tensor TFLOPS | 1,979 dense (3,958 with sparsity) | N/A | H100 (exclusive) |
| NVLink Bandwidth | 900 GB/s | 600 GB/s | H100 (+50%) |
| TDP | 700W | 400W | A100 (lower power) |
| Typical Cloud Price | $2.49–$3.99/hr | $1.79–$2.49/hr | A100 (cheaper) |

Real-World Training Benchmarks

Specifications tell only part of the story. What matters is how these differences translate to actual training speed and cost. We compiled benchmark data from published results by MLCommons, academic papers, and our own testing to provide a comprehensive performance comparison across common AI workloads.

Large Language Model Pre-Training

For training transformer models in the 13B to 70B parameter range, the H100’s FP8 Transformer Engine delivers transformative speedups. In our testing using a Llama 3-style architecture with 13B parameters, training on a single 8x H100 node processed approximately 42,000 tokens per second, compared to 19,500 tokens per second on an equivalent 8x A100 node. This 2.15x throughput advantage means that a training run requiring 14 days on A100s can complete in approximately 6.5 days on H100s.

When you factor in pricing, the math becomes nuanced. At $3.49/hr per H100, the 6.5-day run on 8 GPUs (156 hours × 8 GPUs = 1,248 GPU-hours) costs approximately $4,356. At $2.09/hr per A100, the 14-day run (336 hours × 8 GPUs = 2,688 GPU-hours) costs approximately $5,618. Despite the H100’s higher hourly rate, the total training cost is about 22% lower because the job completes so much faster. This total-cost advantage grows larger as model size increases.
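The cost arithmetic for this example can be reproduced with a few lines of Python. This is a minimal sketch: the function name `run_cost` is our own, the throughput and price figures are the ones quoted in this section, and the calculation assumes the GPUs are billed for every hour of the run with no idle time, storage, or egress charges.

```python
HOURS_PER_DAY = 24

def run_cost(days: float, gpus: int, rate_per_gpu_hour: float) -> float:
    """Total rental cost in dollars for a run of `days` on `gpus` GPUs."""
    return days * HOURS_PER_DAY * gpus * rate_per_gpu_hour

# Throughput figures from the 13B example (tokens per second per 8-GPU node).
h100_tps, a100_tps = 42_000, 19_500
speedup = h100_tps / a100_tps

# The same token budget takes proportionally less wall-clock time on the H100.
a100_days = 14.0
h100_days = a100_days / speedup

h100_cost = run_cost(h100_days, gpus=8, rate_per_gpu_hour=3.49)
a100_cost = run_cost(a100_days, gpus=8, rate_per_gpu_hour=2.09)
savings = 1 - h100_cost / a100_cost

print(f"speedup: {speedup:.2f}x, H100 run: {h100_days:.1f} days")
print(f"H100 ${h100_cost:,.2f} vs A100 ${a100_cost:,.2f} ({savings:.1%} lower)")
```

Swapping in your own provider's rates and your measured throughput is usually more informative than any published benchmark.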

Fine-Tuning (LoRA and QLoRA)

For parameter-efficient fine-tuning (PEFT) methods like LoRA, the performance gap narrows significantly. LoRA only updates a small fraction of the model’s weights, which means the workload is less compute-intensive and more memory-bandwidth-constrained. In our testing, fine-tuning a 7B parameter model with LoRA on a single H100 took approximately 2.8 hours, compared to 4.1 hours on a single A100. The H100 is 46% faster, but at the rates used above ($3.49 vs. $2.09 per hour) it costs 67% more per hour, so the job costs about $9.77 on the H100 versus $8.57 on the A100. Because the speedup is smaller than the price premium, the A100 delivers lower total fine-tuning cost, and we see the same pattern for models under 30B parameters.
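The underlying rule is a break-even test: the faster GPU is cheaper overall only when its speedup exceeds its hourly price ratio. The sketch below illustrates that rule; `cheaper_gpu` is a hypothetical helper, and the $3.49 and $2.09 hourly rates are assumed from the pre-training example rather than measured for this fine-tuning job.

```python
def cheaper_gpu(h100_hours: float, a100_hours: float,
                h100_rate: float, a100_rate: float):
    """Return (winner, h100_cost, a100_cost) for one job timed on both GPUs."""
    h100_cost = h100_hours * h100_rate
    a100_cost = a100_hours * a100_rate
    speedup = a100_hours / h100_hours      # how much faster the H100 finishes
    price_ratio = h100_rate / a100_rate    # how much more it costs per hour
    # The H100 wins on total cost only if it is faster by more than it is pricier.
    winner = "H100" if speedup > price_ratio else "A100"
    return winner, h100_cost, a100_cost

# LoRA fine-tuning of a 7B model: 2.8 h on one H100 vs 4.1 h on one A100.
winner, h100_cost, a100_cost = cheaper_gpu(2.8, 4.1, 3.49, 2.09)
print(winner, round(h100_cost, 2), round(a100_cost, 2))
```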

Inference Throughput

For inference serving, the H100’s superior memory bandwidth (3.35 TB/s vs 2.0 TB/s) provides a substantial advantage. Large language model inference is fundamentally memory-bandwidth-bound during the autoregressive decoding phase, where the GPU must read all of the model’s weights from memory for every token generated. The H100 delivers approximately 60-70% higher tokens-per-second throughput for models in the 7B-70B range.

For teams running production inference at scale — particularly with long context windows (32K+ tokens) — the H100’s bandwidth advantage translates to serving more concurrent users per GPU, which can offset the higher hourly cost and result in lower cost-per-token.
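The bandwidth-bound argument gives a simple upper bound on single-stream decode speed: memory bandwidth divided by the bytes of weights read per token. The sketch below is a back-of-the-envelope estimate only. It assumes FP16 weights (2 bytes per parameter), ignores KV-cache reads, batching, and kernel overheads, and the function name is our own.

```python
def decode_tokens_per_sec_ceiling(params_billion: float,
                                  bytes_per_param: float,
                                  bandwidth_tb_s: float) -> float:
    """Upper bound on decode speed when every token reads all weights once."""
    weight_bytes = params_billion * 1e9 * bytes_per_param
    return bandwidth_tb_s * 1e12 / weight_bytes

# 7B-parameter model with FP16 weights on each GPU.
h100_ceiling = decode_tokens_per_sec_ceiling(7, 2, 3.35)
a100_ceiling = decode_tokens_per_sec_ceiling(7, 2, 2.0)

print(f"H100 ceiling: {h100_ceiling:.0f} tok/s")
print(f"A100 ceiling: {a100_ceiling:.0f} tok/s")
print(f"ratio: {h100_ceiling / a100_ceiling:.2f}x")
```

The ratio of the two ceilings equals the bandwidth ratio, which is why the measured 60-70% throughput gain tracks the 67% bandwidth gap so closely.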

Cloud Pricing Comparison Across Providers

| Provider | H100 SXM5 ($/hr) | A100 80GB ($/hr) | H100 Premium |
|---|---|---|---|
| Lambda Labs | $3.49 | $2.09 | +67% |
| CoreWeave | $3.99 | $2.21 | +81% |
| RunPod | $3.29 | $2.49 | +32% |
| FluidStack | $2.49 | $1.79 | +39% |
| AWS (p5/p4de) | $12.29 | $5.12 | +140% |

The H100’s price premium over the A100 ranges from 32% to 140% depending on the provider. On the four specialized clouds listed, the premium averages roughly 55%. On hyperscalers it balloons to well over 100% (140% on AWS in this table), which makes upgrading to the H100 much less compelling there. Browse all providers on ComputeStacker to see current live pricing.
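The premium column is simply the H100 rate divided by the A100 rate, minus one. A short sketch using the per-GPU hourly prices from the table above shows the calculation; the variable and function names are our own, and prices change often, so treat the figures as a snapshot.

```python
# (H100 $/hr, A100 80GB $/hr) per GPU, copied from the pricing table.
prices = {
    "Lambda Labs": (3.49, 2.09),
    "CoreWeave": (3.99, 2.21),
    "RunPod": (3.29, 2.49),
    "FluidStack": (2.49, 1.79),
    "AWS (p5/p4de)": (12.29, 5.12),
}

def premium_pct(h100_rate: float, a100_rate: float) -> float:
    """H100 hourly premium over the A100, in percent."""
    return (h100_rate / a100_rate - 1) * 100

premiums = {name: premium_pct(h, a) for name, (h, a) in prices.items()}

# Average over the specialized clouds only (everything except AWS).
specialized = [p for name, p in premiums.items() if not name.startswith("AWS")]
specialized_avg = sum(specialized) / len(specialized)

for name, pct in premiums.items():
    print(f"{name}: +{pct:.1f}%")
print(f"specialized-cloud average: +{specialized_avg:.1f}%")
```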

Decision Framework: When to Choose Each GPU

Choose the H100 When:

- Pre-training models above 30B parameters: The FP8 Transformer Engine and memory bandwidth create compounding performance advantages at scale that result in lower total training cost despite the higher hourly rate.

- Running high-throughput inference with long context: Memory bandwidth dominates inference performance for models with 32K+ context windows. The H100 serves 60-70% more tokens per second.

- Time-constrained training runs: When wall-clock time matters more than hourly cost — for example, iterating quickly during research or meeting a product launch deadline — the H100 completes jobs roughly 2x faster.

- Using FP8-optimized frameworks: If your training stack leverages NVIDIA Transformer Engine, DeepSpeed FP8, or Megatron-LM with FP8, you will extract maximum value from the H100’s unique hardware capabilities.

Choose the A100 When:

- Fine-tuning models under 30B parameters: The A100’s lower hourly cost results in lower total cost for workloads where the H100’s throughput advantage does not fully offset its price premium.

- Running sustained inference for short-context workloads: For inference with context lengths under 8K tokens, the memory bandwidth advantage of the H100 is less pronounced, and the A100’s lower cost per hour wins on economics.

- Operating on a tight budget: When maximizing total GPU-hours within a fixed budget, the A100’s 35-45% lower hourly rate buys roughly 55-80% more GPU-hours per dollar.

- Using legacy training code: Older versions of PyTorch (pre-2.1), custom CUDA kernels without FP8 support, or proprietary frameworks that have not been optimized for Hopper will not fully leverage the H100’s capabilities, reducing its performance advantage to a point where it does not justify the premium.
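The two checklists above can be encoded as a small rule-based helper. This is an illustrative sketch, not a substitute for benchmarking: the function `recommend_gpu`, its parameter names, and the order in which the rules are applied are our own assumptions, and any case the checklists do not cover returns "benchmark both".

```python
def recommend_gpu(
    workload: str,
    params_billion: float,
    context_tokens: int = 4096,
    fp8_ready: bool = True,
    deadline_driven: bool = False,
) -> str:
    """Return "H100", "A100", or "benchmark both" for a workload profile."""
    if workload not in {"pretrain", "finetune", "inference"}:
        raise ValueError(f"unknown workload: {workload!r}")
    # Legacy stacks cannot exploit FP8, so the premium is hard to justify.
    if not fp8_ready:
        return "A100"
    # When wall-clock time outranks hourly cost, take the faster GPU.
    if deadline_driven:
        return "H100"
    if workload == "pretrain":
        return "H100" if params_billion > 30 else "benchmark both"
    if workload == "finetune":
        return "A100" if params_billion < 30 else "benchmark both"
    # Inference: long context favours the H100, short context the A100.
    if context_tokens >= 32_000:
        return "H100"
    if context_tokens < 8_000:
        return "A100"
    return "benchmark both"

print(recommend_gpu("pretrain", 70))
print(recommend_gpu("finetune", 7))
print(recommend_gpu("inference", 13, context_tokens=16_000))
```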

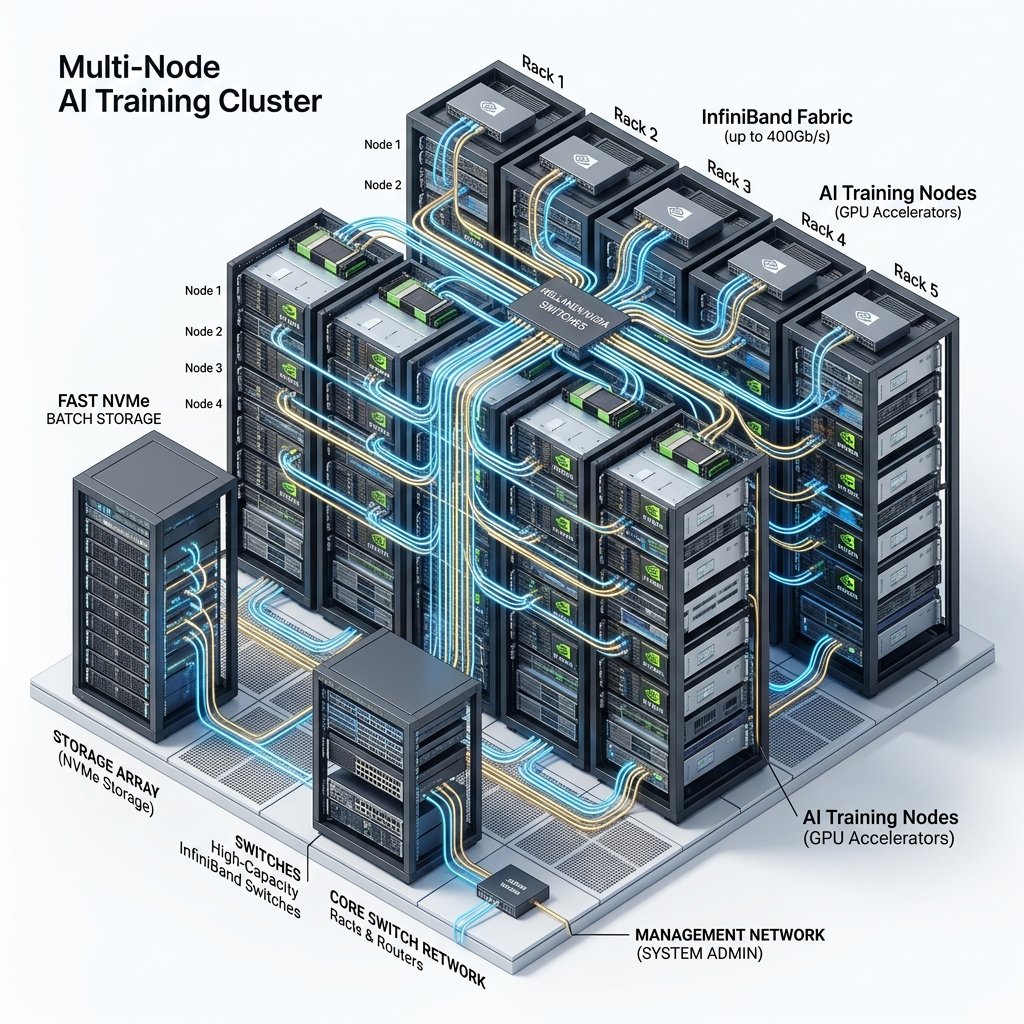

SXM vs. PCIe: The Variant That Matters

Both the H100 and A100 come in two physical form factors: SXM and PCIe. This distinction is frequently overlooked but has significant performance implications. The SXM variant (H100 SXM5, A100 SXM4) connects directly to the server’s GPU baseboard via a high-speed socket, enabling NVLink communication between GPUs within the same node at 900 GB/s (H100) or 600 GB/s (A100). The PCIe variant plugs into a standard PCIe Gen5 (H100) or Gen4 (A100) slot, where an x16 link carries roughly 64 GB/s (Gen5) or 32 GB/s (Gen4) in each direction, about an order of magnitude less than NVLink.

For single-GPU workloads (fine-tuning, inference), the PCIe variant is cheaper but not identical: the H100 PCIe, for example, runs at a 350W power limit with roughly 2.0 TB/s of memory bandwidth, versus 700W and 3.35 TB/s on the SXM5 part, so expect somewhat lower throughput even on one GPU. For multi-GPU training within a single node (tensor parallelism across 4-8 GPUs), the SXM variant is dramatically faster because tensor parallelism requires constant high-bandwidth communication between GPUs. Always verify which variant your provider offers: an “H100” at an unusually low price is often the PCIe version, which will bottleneck multi-GPU workloads significantly.
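To get a feel for the interconnect gap, the sketch below estimates how long a ring all-reduce takes over each link. It uses the standard ring all-reduce traffic volume of 2(N-1)/N times the payload per GPU and the bandwidth figures quoted above. It is a rough model under stated assumptions: it ignores latency, topology, and protocol overhead, and the 1 GB payload is an arbitrary illustration.

```python
def ring_allreduce_seconds(payload_gb: float, num_gpus: int,
                           link_gb_s: float) -> float:
    """Bandwidth-only estimate of ring all-reduce time for one payload."""
    # Each GPU sends (and receives) 2 * (N - 1) / N times the payload size.
    traffic_gb = 2 * (num_gpus - 1) / num_gpus * payload_gb
    return traffic_gb / link_gb_s

payload_gb = 1.0  # hypothetical gradient or activation payload
nvlink_s = ring_allreduce_seconds(payload_gb, num_gpus=8, link_gb_s=900)
pcie5_s = ring_allreduce_seconds(payload_gb, num_gpus=8, link_gb_s=64)

print(f"H100 SXM5 (NVLink): {nvlink_s * 1e3:.2f} ms")
print(f"H100 PCIe (Gen5):   {pcie5_s * 1e3:.2f} ms")
print(f"slowdown: {pcie5_s / nvlink_s:.1f}x")
```

Tensor parallelism issues transfers like this many times per training step, which is why the per-transfer gap compounds into a large end-to-end difference.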

Software Ecosystem and Framework Compatibility

Hardware capabilities are only useful if your software stack can leverage them. The H100’s flagship feature, the Transformer Engine with FP8, requires explicit software support from your training framework. As of mid-2026, the following frameworks support H100 FP8 training, with varying maturity:

- PyTorch 2.1+ with NVIDIA Transformer Engine: Full FP8 support via the `transformer_engine` library. Requires minimal code changes for most transformer architectures.

- DeepSpeed (v0.12+): Supports FP8 mixed-precision training with ZeRO optimizer sharding.

- Megatron-LM: NVIDIA’s own framework for large-scale training includes native FP8 support optimized for H100 clusters.

- JAX/XLA: FP8 support on H100 is available but less mature compared to PyTorch-based solutions.

If your team uses custom CUDA kernels, proprietary frameworks, or older PyTorch versions (pre-2.1), you may not fully benefit from the H100’s FP8 capabilities. In these cases, the H100 still provides advantages through higher memory bandwidth and raw FLOP throughput, but the performance gap with the A100 narrows from as much as 2.4x to approximately 1.3-1.5x, which may be insufficient to justify the price premium.
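A quick preflight check can flag stacks that are unlikely to benefit from FP8 before you rent anything. The sketch below is a heuristic only: the helper names are hypothetical, the PyTorch 2.1 threshold comes from the list above, and it relies on the fact that Hopper GPUs report CUDA compute capability 9.0 while the A100 reports 8.0. It does not verify that Transformer Engine itself is installed.

```python
def torch_at_least(version: str, minimum=(2, 1)) -> bool:
    """Compare a PyTorch version string such as '2.3.1+cu121' to a minimum."""
    core = version.split("+")[0]  # drop a local build suffix like '+cu121'
    major, minor = (int(part) for part in core.split(".")[:2])
    return (major, minor) >= minimum

def is_hopper_or_newer(capability) -> bool:
    """True for compute capability 9.0 and above (H100 is 9.0, A100 is 8.0)."""
    return tuple(capability) >= (9, 0)

def fp8_preflight(torch_version: str, capability) -> bool:
    """Both the framework version and the GPU generation must qualify."""
    return torch_at_least(torch_version) and is_hopper_or_newer(capability)

print(fp8_preflight("2.3.1+cu121", (9, 0)))  # recent PyTorch on an H100
print(fp8_preflight("2.0.1", (9, 0)))        # legacy PyTorch on an H100
print(fp8_preflight("2.3.1", (8, 0)))        # recent PyTorch on an A100
```

On a real machine, the two inputs can be read from `torch.__version__` and `torch.cuda.get_device_capability()`.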

Energy efficiency is another practical consideration that favors the H100 for sustained workloads. Despite its higher TDP (700W vs. 400W), the H100 completes the same training job in roughly half the time, resulting in comparable or lower total energy consumption per training run. For organizations with sustainability mandates or operating in regions with expensive electricity, the H100’s superior performance-per-watt metric for transformer workloads can be a meaningful advantage. Data centers powered by renewable energy, such as those operated by Genesis Cloud in Scandinavia, further mitigate the environmental impact of GPU compute. Visit our Insights section for additional research on sustainable AI infrastructure.
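The energy claim can be checked with the run lengths from the 13B pre-training example. This sketch treats TDP as the sustained draw of every GPU, which is an upper-bound assumption: it ignores host CPUs, networking, cooling overhead, and the fact that real power draw varies with utilization. The function name is our own.

```python
def run_energy_kwh(tdp_watts: float, gpus: int, hours: float) -> float:
    """GPU-only energy for a run, assuming each GPU draws its full TDP."""
    return tdp_watts * gpus * hours / 1000

# 8-GPU nodes: 6.5 days on H100s vs 14 days on A100s.
h100_kwh = run_energy_kwh(700, gpus=8, hours=6.5 * 24)
a100_kwh = run_energy_kwh(400, gpus=8, hours=14 * 24)

print(f"H100 run: {h100_kwh:.1f} kWh")
print(f"A100 run: {a100_kwh:.1f} kWh")
print(f"H100 uses {1 - h100_kwh / a100_kwh:.0%} less GPU energy")
```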

For detailed GPU specifications and provider availability, explore our GPU Types Directory or use the comparison tool to evaluate H100 and A100 options side by side.

Frequently Asked Questions

Is the H100 worth the price premium over the A100?

For large-scale pre-training (30B+ parameters) and high-throughput inference, yes. The H100’s FP8 support and 67% higher memory bandwidth deliver 1.7-2.4x faster training, which typically results in lower total training cost despite the 50-70% higher hourly rate. For fine-tuning and inference on smaller models, the A100 generally offers better cost-efficiency. The break-even point depends on your specific model architecture, batch size, and sequence length.

Can I train Llama 3 70B on A100 GPUs?

Yes. Llama 3 70B can be trained on A100 80GB GPUs by combining tensor parallelism across the 8 GPUs in a node with pipeline or data parallelism across nodes, and by using gradient checkpointing to reduce activation memory. Training will be roughly 2x slower than on H100s, but this can be a cost-effective approach for teams with budget constraints and flexible timelines. You will need a minimum of 16 A100 GPUs (2 nodes) for reasonable training speed, with 32-64 GPUs recommended for production-quality training runs.
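A rough memory estimate shows why a single 8-GPU node is not enough. The sketch assumes mixed-precision training with Adam at about 16 bytes per parameter (2 for FP16 weights, 2 for FP16 gradients, and 12 for FP32 master weights plus two optimizer moments), with all of that state sharded evenly across GPUs. Activations and framework overhead are excluded, so the real requirement is higher. The function name is hypothetical.

```python
import math

# 2 (FP16 weights) + 2 (FP16 grads) + 12 (FP32 master weights, momentum, variance)
BYTES_PER_PARAM_MIXED_ADAM = 16

def min_gpus_for_model_state(params_billion: float, vram_gb: float = 80,
                             bytes_per_param: int = BYTES_PER_PARAM_MIXED_ADAM):
    """Return (total state in GB, GPUs needed just to hold that state)."""
    # Billions of parameters times bytes per parameter gives gigabytes directly.
    state_gb = params_billion * bytes_per_param
    return state_gb, math.ceil(state_gb / vram_gb)

state_gb, gpus_needed = min_gpus_for_model_state(70)
print(f"model state: {state_gb:.0f} GB, at least {gpus_needed} x 80 GB GPUs")
```

Rounding the result up to whole 8-GPU nodes, with headroom for activations, lands on the two-node minimum mentioned above.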

Should I wait for the H200 or rent H100s now?

If your project timeline allows, monitoring H200 availability is worthwhile. However, H200 supply remains very limited in mid-2026, with most units allocated to hyperscalers and major contract customers. For immediate projects, the H100 remains the best available option for large-scale training, and its pricing is at a historical low due to increased supply. We do not recommend delaying projects to wait for hardware that may have limited availability for months.

What about AMD MI300X as an alternative to both H100 and A100?

The AMD Instinct MI300X offers 192GB of HBM3 memory — more than double the H100’s 80GB — making it exceptionally powerful for inference of very large models that benefit from fitting entirely in GPU memory. Cloud availability remains limited compared to NVIDIA, but providers like CoreWeave and Vultr are expanding their MI300X inventory. The MI300X is a serious contender for inference workloads, though NVIDIA’s CUDA software ecosystem advantage persists for most training applications. ROCm compatibility continues to improve but requires careful testing with your specific framework and model architecture.
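The memory-capacity argument is easy to check for a given model. The sketch below only compares weight size against VRAM, assuming FP16 weights at 2 bytes per parameter. It ignores the KV cache and runtime overhead, both of which need additional headroom, and the helper names are our own.

```python
def weights_gb(params_billion: float, bytes_per_param: float = 2) -> float:
    """Size of the model weights in GB (FP16 by default)."""
    return params_billion * bytes_per_param

def fits_on_one_gpu(params_billion: float, vram_gb: float,
                    bytes_per_param: float = 2) -> bool:
    """True if the weights alone fit in a single GPU's memory."""
    return weights_gb(params_billion, bytes_per_param) < vram_gb

# A 70B model in FP16 on an 80 GB H100 vs a 192 GB MI300X.
print(weights_gb(70))              # GB of weights
print(fits_on_one_gpu(70, 80))     # H100 80GB
print(fits_on_one_gpu(70, 192))    # MI300X 192GB
```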
