Picking the wrong GPU cloud provider is an expensive mistake. We’ve seen startups waste months of engineering time and tens of thousands of dollars moving their training infrastructure after choosing a provider that looked great on a pricing page but couldn’t deliver at scale. This guide gives you the framework to make a confident decision the first time.

The 8 Questions You Must Answer First

Before you evaluate a single provider, answer these questions about your workload:

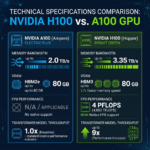

- What GPU do you need? H100, A100, RTX 4090, or something else?

- How many GPUs at a time? Single GPU vs small cluster (2-8) vs large cluster (16+)?

- For how long and how often? Continuous 24/7, scheduled daily, or on-demand burst?

- What’s your data residency requirement? US-only, EU-only (GDPR), or flexible?

- Do you need managed services or bare compute? Notebooks, MLOps platform, or raw SSH access?

- What’s your monthly GPU budget? Under $2K, $2K–$20K, or $20K+?

- What are your compliance requirements? SOC 2, HIPAA, ISO 27001, GDPR?

- How quickly do you need to scale? Hours (on-demand) or days (reserved)?

The Provider Selection Framework

With your answers in hand, here’s how to narrow down providers:

For Solo Developers and Small Teams (Under $2K/month)

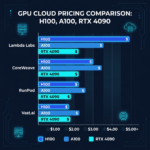

RunPod and Vast.ai are your best options. RunPod’s Community Cloud offers the best balance of cost and reliability for development and experimentation. Vast.ai gives you the absolute lowest prices but requires more tolerance for hardware variability. Both offer pay-per-second billing with no commitments.

For Growing AI Startups ($2K–$20K/month)

Lambda Labs is the sweet spot: competitive H100 and A100 pricing, reliable infrastructure, clean developer experience, and no enterprise contract requirements. For teams needing on-demand cluster scaling, RunPod Secure Cloud or Paperspace are solid alternatives with managed features.

For Enterprise AI Teams ($20K+/month)

CoreWeave, Voltage Park, and FluidStack compete for enterprise business. CoreWeave wins on Kubernetes-native infrastructure and ecosystem integrations. Voltage Park wins on raw H100 cluster scale. FluidStack offers a strong EU presence with competitive pricing.
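The three budget tiers above can be sketched as a simple lookup. This is only an illustration of the framework: the function name `shortlist_providers` is hypothetical, and placing exactly $2K in the startup tier and exactly $20K in the enterprise tier is an assumption, since the tier labels overlap at those boundaries.

```python
def shortlist_providers(monthly_budget_usd: float) -> tuple[str, list[str]]:
    """Map a monthly GPU budget to the tier and shortlist suggested in this guide."""
    if monthly_budget_usd < 0:
        raise ValueError("monthly_budget_usd must be non-negative")
    if monthly_budget_usd < 2_000:
        return "solo / small team", ["RunPod", "Vast.ai"]
    # Assumption: exactly $20K is treated as enterprise ("$20K+").
    if monthly_budget_usd < 20_000:
        return "growing startup", ["Lambda Labs", "RunPod Secure Cloud", "Paperspace"]
    return "enterprise", ["CoreWeave", "Voltage Park", "FluidStack"]


tier, providers = shortlist_providers(8_500)
print(f"{tier}: {', '.join(providers)}")
```

Budget is only the first filter. Your answers on data residency, compliance, and cluster size should still narrow the shortlist further.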

Evaluating Provider Reliability

Price is easy to compare. Reliability is harder. Here’s what to actually check:

- Uptime history: Ask for historical uptime data or check their status page. Look for a written SLA of 99% uptime or better.

- Spot vs on-demand: Will your instance be preempted? What’s the notice period? Is there automatic checkpoint saving?

- Support responsiveness: Send a pre-sales question and time the response. Good providers answer within 2 hours during business hours.

- Community feedback: Check Twitter/X, Reddit (r/LocalLLaMA, r/MachineLearning), and Discord servers for honest user experiences.
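To put an uptime SLA in perspective, it helps to convert the percentage into the downtime it still permits. The sketch below does that arithmetic; the function name `allowed_downtime_hours` and the 30-day month are assumptions made for illustration.

```python
HOURS_PER_MONTH = 30 * 24  # assumption: a 30-day billing month


def allowed_downtime_hours(uptime_percent: float,
                           period_hours: float = HOURS_PER_MONTH) -> float:
    """Hours of downtime a provider can have in the period without breaching the SLA."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    return period_hours * (1 - uptime_percent / 100)


for sla in (99.0, 99.9, 99.99):
    print(f"{sla}% uptime allows {allowed_downtime_hours(sla):.2f} h of downtime per month")
```

Under these assumptions, a 99% SLA still permits about 7.2 hours of downtime a month, while 99.9% permits about 43 minutes. For a multi-day training run, that difference is worth asking about.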

Network Performance Matters More Than You Think

For multi-GPU training, the interconnect between GPUs (NVLink within a node, InfiniBand between nodes) has a massive impact on training speed. A provider with fast GPUs but slow inter-node networking can deliver 30-50% worse training throughput than expected. Before committing to a large cluster, run an all-reduce benchmark (for example, all_reduce_perf from NVIDIA's nccl-tests) to measure actual inter-GPU bandwidth.
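When reading all-reduce results, two numbers matter: algorithm bandwidth (message size divided by time) and bus bandwidth, which the nccl-tests documentation derives for all-reduce by scaling algorithm bandwidth by 2(n-1)/n so it can be compared against the hardware's link speed. The sketch below reproduces that arithmetic from a timing you measured yourself; the function name and the sample numbers are hypothetical.

```python
def allreduce_bandwidth_gb_per_s(message_bytes: int,
                                 elapsed_seconds: float,
                                 num_ranks: int) -> tuple[float, float]:
    """Return (algorithm bandwidth, bus bandwidth) in GB/s for one all-reduce."""
    if num_ranks < 2:
        raise ValueError("all-reduce needs at least 2 ranks")
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    algbw = message_bytes / elapsed_seconds / 1e9
    # All-reduce correction factor used by nccl-tests: 2 * (n - 1) / n.
    busbw = algbw * 2 * (num_ranks - 1) / num_ranks
    return algbw, busbw


# Hypothetical measurement: a 1 GB message reduced across 8 GPUs in 10 ms.
algbw, busbw = allreduce_bandwidth_gb_per_s(1_000_000_000, 0.010, 8)
print(f"algbw = {algbw:.1f} GB/s, busbw = {busbw:.1f} GB/s")
```

Compare the bus bandwidth against the interconnect speed the provider advertises. A large gap, especially once the test spans more than one node, is the kind of shortfall that shows up later as lost training throughput.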

Storage: The Often-Overlooked Factor

Training pipelines move enormous amounts of data. Evaluate:

- Local NVMe speed: Critical for datasets that don’t fit in RAM. Look for 5-7 GB/s sequential read.

- Shared filesystem latency: NFS or parallel filesystems are typically used for checkpoint storage. High latency makes every checkpoint slow, which forces you to checkpoint less often.

- Object storage integration: Can you sync to S3-compatible storage easily? What are the egress costs?
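Two of the checks above reduce to simple arithmetic: how long one pass over the dataset takes at a given sequential read speed, and what it costs to move data out. The following is a rough sketch; the function names, the 2,000 GB dataset, and the $0.05/GB egress price are hypothetical placeholders, so substitute the figures from your own workload and the provider's price list.

```python
def read_time_seconds(dataset_gb: float, read_gb_per_s: float) -> float:
    """Time for one sequential pass over the dataset at the given read speed."""
    if read_gb_per_s <= 0:
        raise ValueError("read_gb_per_s must be positive")
    return dataset_gb / read_gb_per_s


def egress_cost_usd(transfer_gb: float, usd_per_gb: float) -> float:
    """Cost of transferring data out of the provider at a flat per-GB price."""
    return transfer_gb * usd_per_gb


dataset_gb = 2_000  # hypothetical dataset size
for speed in (1.0, 5.0, 7.0):
    minutes = read_time_seconds(dataset_gb, speed) / 60
    print(f"{speed:.0f} GB/s -> {minutes:.1f} min per pass")

# Hypothetical price; many providers publish their own egress rates.
print(f"egress for the full dataset: ${egress_cost_usd(dataset_gb, 0.05):,.2f}")
```

This ignores caching, small-file overhead, and tiered egress pricing, so treat the output as a first-order estimate rather than a forecast.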

Red Flags to Watch For

- No published uptime SLA or historical status data

- Opaque pricing requiring a sales call for basic configurations

- No trial credits, no pay-as-you-go option

- Poor documentation: if the docs are bad, support will be worse

- No user community or public references from real customers

The Shortcut: Use a Marketplace

If evaluating 10+ providers sounds exhausting, there’s a better way. ComputeStacker lists and rates all major GPU cloud providers with verified pricing, hardware specs, and user reviews in one place. You can compare any two providers side-by-side, or submit your requirements and receive competitive quotes from multiple providers simultaneously, in under 24 hours.

You can also filter providers by geographic region to ensure data residency compliance, or by GPU type to find the exact hardware you need.

Frequently Asked Questions

What is the best GPU cloud provider for beginners?

RunPod is the best starting point for most beginners in 2026. It offers a clean interface, extensive pre-built templates for popular AI models, affordable pricing starting from $0.34/hr for an RTX 4090, and a large community with tutorials and support. Paperspace’s Gradient is also excellent if you prefer a managed notebook environment.

How do I compare GPU cloud providers by price?

Use ComputeStacker’s comparison tool to compare GPU cloud providers by price, hardware specs, and reliability ratings. Key pricing metrics to compare are the hourly on-demand rate per GPU, storage costs, and any egress fees. Always calculate total cost including storage and data transfer, not just compute.
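As a minimal sketch of that total-cost calculation, the example below adds compute, storage, and egress for one month. The $0.34/hr GPU rate is the RTX 4090 figure quoted earlier; the class and function names, the usage numbers, and the storage and egress rates are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MonthlyUsage:
    gpu_count: int
    hours_per_gpu: float
    gpu_usd_per_hour: float
    storage_gb: float
    storage_usd_per_gb_month: float
    egress_gb: float
    egress_usd_per_gb: float


def monthly_cost_breakdown(usage: MonthlyUsage) -> dict[str, float]:
    """Split a month's bill into compute, storage, egress, and total (USD)."""
    compute = usage.gpu_count * usage.hours_per_gpu * usage.gpu_usd_per_hour
    storage = usage.storage_gb * usage.storage_usd_per_gb_month
    egress = usage.egress_gb * usage.egress_usd_per_gb
    return {"compute": compute, "storage": storage, "egress": egress,
            "total": compute + storage + egress}


# Hypothetical month: one GPU for 200 hours, 500 GB stored, 100 GB transferred out.
example = MonthlyUsage(gpu_count=1, hours_per_gpu=200, gpu_usd_per_hour=0.34,
                       storage_gb=500, storage_usd_per_gb_month=0.10,
                       egress_gb=100, egress_usd_per_gb=0.05)
for item, usd in monthly_cost_breakdown(example).items():
    print(f"{item:>8}: ${usd:,.2f}")
```

With these made-up rates, storage and egress account for $55 of the $123 total, which is why the hourly GPU rate alone can be a misleading basis for comparison.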
