Choosing between the NVIDIA H100 and A100 is the most common hardware decision AI teams face when planning a training or inference project in 2026. The H100 is newer and faster — but it’s also more expensive, and for many workloads, the A100 still delivers better value. The right answer depends entirely on your specific use case, model size, and budget.

This guide breaks down both GPUs across every dimension that matters for real AI workloads: raw throughput, memory, interconnects, pricing, and availability.

The Quick Answer

- Training models larger than 30B parameters: H100 — the memory bandwidth and FP8 support make a real difference at scale.

- Training 7B-30B parameter models: A100 80GB — better price-performance, excellent availability.

- Fine-tuning and inference: Either works; A100 is cheaper for sustained inference serving.

- Image generation and computer vision: RTX 4090 often beats both on price per token/image.

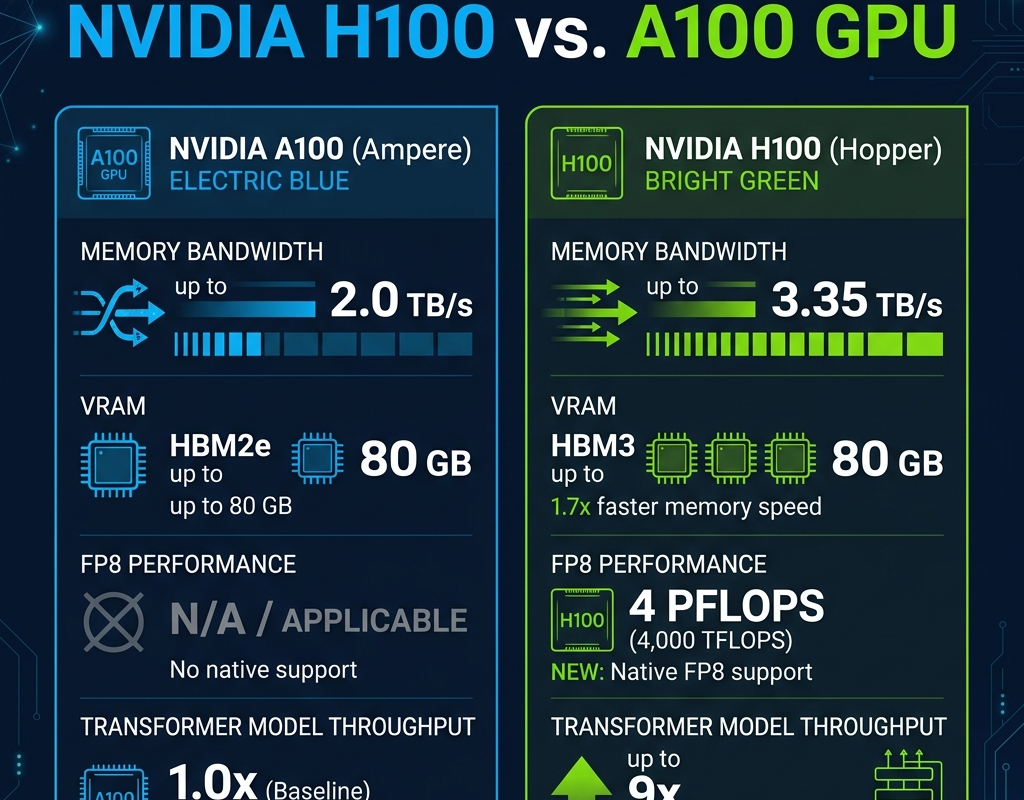

Technical Specifications Compared

| Specification | H100 SXM5 | A100 SXM4 |

|---|---|---|

| Architecture | Hopper (2022) | Ampere (2020) |

| VRAM | 80GB HBM3 | 80GB HBM2e |

| Memory Bandwidth | 3.35 TB/s | 2.0 TB/s |

| FP16 Tensor Throughput | 989 TFLOPS | 312 TFLOPS |

| FP8 Support | Yes (4 PFLOPS) | No |

| NVLink Bandwidth | 900 GB/s | 600 GB/s |

| TDP | 700W | 400W |

| Cloud Price (typical) | $3.11–3.75/hr | $2.10–2.21/hr |

What FP8 Actually Means for Training Speed

The H100’s FP8 support is its biggest differentiator from the A100. FP8 training allows models to train at roughly 2x the throughput of FP16 (BFloat16) with minimal accuracy degradation when proper loss scaling is applied. For transformer models, which spend most of their compute in matrix multiplications, this translates to genuine 1.5-2x wall-clock speedups on the H100 vs A100 for the same batch size.

In practice, teams training Llama or Mistral architecture models report 40-70% faster token throughput on H100 clusters vs equivalent A100 clusters. For a multi-week pre-training run, that savings in wall-clock time often justifies the premium.

Memory Bandwidth: The Real Bottleneck

For transformer inference — particularly with long context windows — memory bandwidth is often the primary bottleneck, not raw FLOPS. The H100’s 3.35 TB/s vs the A100’s 2.0 TB/s creates a 67% advantage in memory-bound workloads. This directly translates to higher tokens/second throughput during inference, especially for large-context requests (32K+ tokens).

For teams running production inference with high-context workloads (RAG applications, long-document summarization, code generation), the H100 often pays for itself in lower inference cost per token despite the higher hourly rate.

Multi-GPU Scaling: InfiniBand and NVLink

When you scale beyond a single GPU, the interconnect becomes critical. Both H100 and A100 SXM variants use NVLink — but the H100 uses NVLink 4.0 at 900GB/s vs the A100’s NVLink 3.0 at 600GB/s. In a standard 8-GPU server, this 50% bandwidth improvement significantly reduces all-reduce communication overhead during distributed data-parallel training.

For multi-node clusters (more than 8 GPUs), InfiniBand networking dominates. Both GPUs connect via the same InfiniBand fabric on major providers, so provider infrastructure quality matters more than GPU generation at this scale.

Pricing on Major GPU Cloud Providers (2026)

| Provider | H100 SXM5 ($/hr) | A100 SXM4 80GB ($/hr) |

|---|---|---|

| Lambda Labs | $3.11 | $2.20 |

| CoreWeave | $3.75 | $2.21 |

| RunPod | $4.49 | $2.49 |

| Cudo Compute | $3.25 | $2.10 |

| FluidStack | $2.19 | $1.89 |

When to Choose the A100

- Training 7B-30B parameter models where H100 FP8 gains don’t justify the 40-70% price premium

- Inference serving for models that fit in 40-80GB VRAM with moderate context lengths

- Budget-constrained research where maximizing GPU-hours matters more than raw throughput

- Teams using older PyTorch/JAX versions without H100-optimized kernels

When to Choose the H100

- Pre-training models above 30B parameters — the memory bandwidth advantage compounds at scale

- Production inference with long context windows (32K+ tokens) or high-QPS requirements

- Teams using FP8-optimized training frameworks (Transformer Engine, DeepSpeed FP8)

- Time-constrained training runs where faster wall-clock time matters more than hourly cost

Want to run your workload on both and compare actual results? Browse providers offering H100 and A100 instances on ComputeStacker. Use our GPU types guide to explore full specs, or request quotes from providers for both configurations.

Frequently Asked Questions

Is the H100 worth the premium over the A100?

For large model pre-training (30B+ parameters) and high-throughput inference, yes — the H100’s FP8 support and memory bandwidth advantage often deliver 40-70% faster training, which justifies the 40-70% price premium. For smaller models and fine-tuning workloads, the A100 typically offers better cost-efficiency.

Can I use A100 for LLaMA 3 70B training?

Yes. LLaMA 3 70B can be trained on A100 80GB GPUs using tensor parallelism and gradient checkpointing across multiple nodes. It will be significantly slower than H100 training but is a cost-effective approach for teams with budget constraints.

Get personalised, no-commitment quotes from top AI infrastructure providers in under 2 minutes.