When people ask “how much does it cost to train an AI model?” the honest answer is: it depends on four things — the size of the model, the GPU you’re using, the provider you choose, and how efficiently your training code is written. Get all four right and you can train a capable 7B parameter model for under $5,000. Get them wrong and you’ll spend ten times that for the same result.

This breakdown covers real 2026 pricing for GPU compute, storage, and data transfer — so you can build an accurate budget before you commit a single dollar.

The Biggest Variable: Model Size

Model parameter count is the first lever. Here’s a rough guide to GPU-hour requirements for training transformer models from scratch on 1 trillion tokens:

| Model Size | GPU-Hours (H100) | Est. Cost (Lambda Labs) |

|---|---|---|

| 1B parameters | ~500 GPU-hrs | ~$1,555 |

| 7B parameters | ~3,500 GPU-hrs | ~$10,885 |

| 13B parameters | ~6,500 GPU-hrs | ~$20,215 |

| 70B parameters | ~35,000 GPU-hrs | ~$108,850 |

| 405B parameters | ~200,000 GPU-hrs | ~$622,000 |

Note: These are estimates assuming efficient distributed training. Actual costs vary based on MFU (model FLOP utilization), dataset size, batch size optimization, and gradient checkpointing strategy.

Fine-Tuning vs Pre-Training: A Very Different Budget

Most teams don’t train from scratch. Supervised fine-tuning (SFT) and parameter-efficient fine-tuning methods like LoRA and QLoRA dramatically reduce the compute required:

- LoRA fine-tuning a 7B model: 4-16 GPU-hours (~$12-50 on an A100)

- Full fine-tuning a 7B model: 50-200 GPU-hours (~$110-440)

- RLHF on a 13B model: 200-800 GPU-hours (~$440-1,760)

If your goal is building a domain-specific assistant or classifier, fine-tuning a Llama 3, Mistral, or Qwen base model is 10-100x cheaper than pre-training. Start here unless you have a compelling reason to train from scratch.

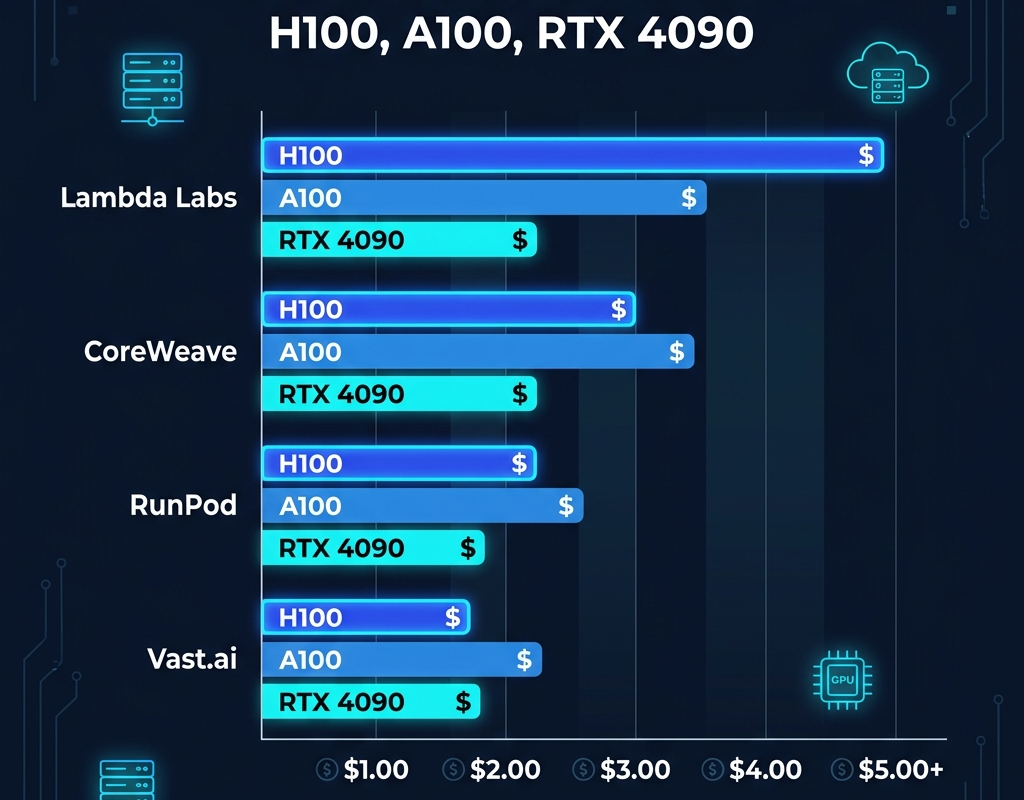

Which GPU Gives the Best Cost-Performance?

This is where provider choice and hardware selection interact:

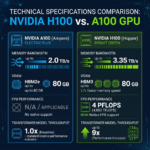

H100 SXM5 — Best for Large Models

At $3.11/hr on Lambda Labs, the H100 SXM5 delivers 4 PFLOPS of FP8 throughput, 3.35 TB/s memory bandwidth, and 80GB HBM3 VRAM. For models that don’t fit on a single A100 (>40B parameters), the H100 is essentially obligatory.

A100 SXM4 80GB — Best Price-Performance Sweet Spot

At $2.10-2.20/hr on most providers, the A100 80GB delivers excellent performance for 7B-70B parameter models. It’s roughly 2x cheaper than an H100 and still very capable.

RTX 4090 — Best for Fine-Tuning and Inference

At $0.34-0.74/hr, the RTX 4090 is 4-9x cheaper than an H100. For LoRA fine-tuning, image generation, or inference serving, it delivers outstanding value. The 24GB VRAM limits it to smaller models, but quantization (GGUF, GPTQ, AWQ) enables serving 70B models in 4-bit on a single 4090.

Storage Costs: Often Underestimated

Training data, model checkpoints, and outputs add up quickly:

- Training dataset (1T tokens): ~500GB-2TB depending on format. At $0.02-0.06/GB/month on most GPU clouds, that’s $10-120/month.

- Model checkpoints: A 70B parameter model in BF16 = ~140GB per checkpoint. Saving every 1,000 steps across a 3-week run = terabytes of storage.

- Checkpoint strategy tip: Save every N steps but only retain the last 3 checkpoints unless you specifically need older ones.

Real Budget Example: Training a Custom 7B Model

Let’s build a full budget for a startup training a custom 7B model on proprietary data:

| Cost Category | Estimate |

|---|---|

| Pre-training compute (H100, Lambda Labs) | $10,885 |

| SFT fine-tuning (A100, RunPod) | $440 |

| RLHF / DPO alignment (A100, RunPod) | $880 |

| Storage (3 months, 2TB) | $120 |

| Evaluation runs and experiments | $500 |

| Data egress / transfers | $100 |

| Total | ~$12,925 |

This is a realistic budget for a capable, domain-specialized 7B language model — not a back-of-napkin estimate. The key is choosing the right provider for each stage: high-availability H100s for pre-training, cheaper A100s for alignment tuning.

Tips to Cut Your Training Budget by 30-50%

- Maximize MFU: Poor model FLOP utilization is the single biggest hidden cost. Use FlashAttention-2, gradient checkpointing, and proper batch sizing to keep MFU above 40%.

- Use spot/preemptible instances: On RunPod and Vast.ai, community cloud pricing is 40-60% cheaper. Build fault tolerance into your training loop with checkpoint recovery.

- Start small and scale: Prototype on RTX 4090s. Only move to H100 clusters when architecture is validated.

- Use reserved pricing: A 1-month H100 reservation typically cuts hourly pricing by 30-40% vs on-demand.

Ready to get quotes for your specific training budget? Submit your compute requirements on ComputeStacker and receive competitive quotes from multiple providers. You can also browse provider listings to compare pricing directly, or use our GPU types guide to choose the right hardware for your workload.

Frequently Asked Questions

How much does it cost to train a 7B parameter LLM in 2026?

Training a 7B parameter LLM from scratch on 1 trillion tokens costs approximately $10,000-15,000 in GPU compute using H100 instances at current 2026 pricing. Fine-tuning an existing 7B model is dramatically cheaper — typically $50-500 depending on dataset size and method used.

Which GPU is most cost-effective for AI training in 2026?

The A100 80GB offers the best cost-performance balance for most training workloads in 2026, running $2.10-2.20/hr on most providers. For large models over 70B parameters, the H100 is necessary for its memory bandwidth and capacity. For fine-tuning and inference, the RTX 4090 at $0.34-0.74/hr delivers outstanding value.

Get personalised, no-commitment quotes from top AI infrastructure providers in under 2 minutes.