The single most effective lever for reducing your AI compute bill is switching from on-demand to spot GPU instances. Spot instances — also called interruptible, preemptible, or auction-based instances — offer the exact same GPU hardware at discounts of 40% to 80% compared to on-demand pricing. The trade-off is simple: the provider can reclaim your GPU with minimal notice (typically 30 to 120 seconds) when demand from on-demand customers increases.

For many AI workloads, this trade-off is overwhelmingly favorable. Modern training frameworks support checkpoint-and-resume natively, which means a spot instance interruption results in a minor delay (restarting from the last checkpoint), not a catastrophic loss of progress. Teams that master spot instance strategies routinely reduce their annual compute spend by hundreds of thousands of dollars at the cost of a modest increase in wall-clock training time.

This guide provides a comprehensive analysis of when spot instances are appropriate, how to engineer fault-tolerant training pipelines, which providers offer the best spot pricing, and when you should absolutely insist on on-demand guarantees.

- Spot pricing for H100 GPUs on specialized providers ranges from $1.50 to $2.50/hr, compared to $3.00 to $4.00/hr on-demand. Hyperscaler rates are several times higher in both tiers.

- Spot instances are ideal for pre-training, hyperparameter sweeps, batch inference, and fine-tuning — any workload that can be checkpointed and resumed.

- Production inference serving should never run on spot instances due to the impact of interruptions on user-facing availability.

- Building a fault-tolerant spot pipeline requires engineering investment, but the ROI is typically 3-5x within the first month.

Understanding Spot Instance Economics

To understand why spot pricing exists, you need to understand the business model of GPU cloud providers. Providers invest billions in GPU hardware that must generate revenue 24/7 to be profitable. However, demand for GPU compute is not constant — it fluctuates based on time of day, day of week, and market cycles. During off-peak periods, providers have idle GPUs generating zero revenue.

Spot pricing solves this problem. Rather than leaving GPUs idle, providers auction excess capacity at steep discounts, knowing they can reclaim it when full-price customers arrive. For the provider, earning $1.50/hr on a spot instance is infinitely better than earning $0/hr on an idle GPU. For the customer, accessing an H100 at half price is an extraordinary opportunity — provided you can handle the interruption risk.

Spot Pricing Across Major Providers (2026)

| Provider | GPU | On-Demand ($/hr) | Spot ($/hr) | Savings |
|---|---|---|---|---|
| AWS | H100 (p5) | $12.29/GPU | ~$5.50/GPU | 55% |
| GCP | H100 (a3) | $11.56/GPU | ~$4.90/GPU | 58% |
| RunPod | H100 PCIe | $3.29 | $1.99 | 40% |
| RunPod | RTX 4090 | $0.39 | $0.19 | 51% |
| Vast.ai | A100 80GB | ~$1.50 | ~$0.70 | 53% |
| Vast.ai | RTX 3090 | ~$0.20 | ~$0.09 | 55% |

On hyperscalers, spot savings are percentage-wise impressive but still result in high absolute prices. On specialized providers, spot pricing delivers both percentage discounts and low absolute costs, making them the clear winner for budget-sensitive teams. Compare all providers on ComputeStacker to see current spot availability.
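The distinction between percentage savings and absolute price can be checked directly from the table. The following is a minimal sketch that recomputes the Savings column and the dollars saved per GPU-hour; the prices are copied from the table, and the names `PRICES` and `savings_pct` are illustrative only.

```python
# Prices copied from the table above, in $/GPU-hour: (on_demand, spot).
PRICES = {
    ("AWS", "H100 (p5)"): (12.29, 5.50),
    ("GCP", "H100 (a3)"): (11.56, 4.90),
    ("RunPod", "H100 PCIe"): (3.29, 1.99),
    ("RunPod", "RTX 4090"): (0.39, 0.19),
    ("Vast.ai", "A100 80GB"): (1.50, 0.70),
    ("Vast.ai", "RTX 3090"): (0.20, 0.09),
}


def savings_pct(on_demand: float, spot: float) -> float:
    """Return the spot discount as a percentage of the on-demand price."""
    return (1 - spot / on_demand) * 100


for (provider, gpu), (on_demand, spot) in PRICES.items():
    print(
        f"{provider:8} {gpu:10} {savings_pct(on_demand, spot):3.0f}% off, "
        f"spot ${spot:.2f}/hr, saves ${on_demand - spot:.2f}/hr per GPU"
    )
```

Note that the AWS H100 spot price, despite a 55% discount, is still higher than the RunPod H100 on-demand price in this table.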

When to Use Spot Instances

Spot instances are appropriate for any workload that meets two criteria: it can be interrupted without catastrophic consequences, and it can resume from a checkpoint without repeating significant work.

Ideal Spot Workloads

- Pre-training and Continued Pre-Training: Modern training frameworks (PyTorch, DeepSpeed, Megatron-LM) support automatic checkpointing at configurable intervals. With checkpoints saved every 15-30 minutes, a spot interruption wastes at most 30 minutes of compute — trivial compared to the thousands of dollars saved over a multi-week training run.

- Hyperparameter Sweeps: Each trial in a hyperparameter search is independent. If one trial is interrupted, it can be restarted or abandoned without affecting other trials. Tools like Optuna and Weights & Biases Sweeps handle trial management gracefully with spot interruptions.

- Batch Inference: Processing a large corpus of documents, images, or audio files through an AI model is inherently parallelizable and resumable. If a spot instance is reclaimed, simply resume processing from where you left off.

- Fine-Tuning: LoRA and full fine-tuning workloads typically complete in hours, not weeks. The probability of interruption during a 4-hour run is relatively low, and checkpoint-based resumption handles the rare interruption gracefully.

- Data Preprocessing: Tokenization, deduplication, embedding generation, and other preprocessing tasks are highly parallelizable and naturally resumable.
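To make "resume processing from where you left off" concrete for batch inference and preprocessing, here is a minimal sketch of a resumable loop. It assumes each item has a stable ID and that the progress file lives on storage that survives instance termination; `process_resumably` and the JSON-lines progress format are hypothetical choices, not something any particular framework prescribes.

```python
import json
from pathlib import Path
from typing import Any, Callable, Iterable, Tuple


def load_done_ids(progress_path: Path) -> set:
    """Read the IDs of items already processed in earlier runs."""
    done = set()
    if progress_path.exists():
        for line in progress_path.read_text().splitlines():
            try:
                done.add(json.loads(line)["id"])
            except (json.JSONDecodeError, KeyError, TypeError):
                continue  # skip a record torn by an interruption mid-write
    return done


def process_resumably(
    items: Iterable[Tuple[str, Any]],
    process: Callable[[Any], Any],
    progress_path: Path,
) -> int:
    """Process (id, payload) pairs, skipping IDs already recorded as done.

    Returns the number of items processed in this run.
    """
    done = load_done_ids(progress_path)
    processed = 0
    with progress_path.open("a") as log:
        for item_id, payload in items:
            if item_id in done:
                continue
            result = process(payload)
            log.write(json.dumps({"id": item_id, "result": result}) + "\n")
            log.flush()  # keep the record visible if the instance dies next
            processed += 1
    return processed
```

This gives at-least-once behavior: an item whose record was not fully written before the interruption is processed again on restart, so `process` should be safe to repeat.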

When NOT to Use Spot Instances

- Production Inference Serving: If a user sends a query to your AI chatbot and the underlying GPU is reclaimed mid-generation, the user receives an error. For user-facing applications requiring guaranteed availability, on-demand or reserved instances are mandatory.

- Real-Time Processing: Applications requiring continuous, uninterrupted GPU access — such as live video analysis, real-time recommendation systems, or autonomous vehicle simulation — cannot tolerate interruptions.

- Short Time-Sensitive Training: If you have a hard deadline and the training run must complete within a specific window, spot instance risk may be unacceptable. On-demand provides the guarantee you need.

Engineering Fault-Tolerant Spot Pipelines

The engineering investment required to use spot instances effectively is surprisingly modest. Most modern ML frameworks provide the building blocks out of the box.

Checkpointing Strategy

The foundation of spot tolerance is frequent, reliable checkpointing. A checkpoint saves the complete training state (model weights, optimizer state, learning rate scheduler state, data loader position, and random number generator state) to persistent storage. When a new instance launches, it loads the checkpoint and resumes training exactly where it left off.

Best practices for checkpointing in spot environments include:

- Saving checkpoints every 15-30 minutes, balancing I/O overhead against lost-work risk.

- Using asynchronous checkpoint saving to avoid blocking training.

- Storing checkpoints on provider-independent storage (Cloudflare R2, AWS S3, or a dedicated NFS mount) rather than local instance storage, which is destroyed on termination.

- Maintaining at least 3 recent checkpoints to protect against corruption.
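Two of these practices, retaining several recent checkpoints and guarding against corruption, can be sketched with the standard library alone. The example below is an illustration under stated assumptions: it uses `pickle` on a plain dict so that it runs anywhere (a PyTorch job would typically use `torch.save` and `torch.load` instead), it assumes `ckpt_dir` is a persistent mount, and the function names and file naming scheme are hypothetical.

```python
import os
import pickle
from pathlib import Path
from typing import Optional

KEEP_LAST = 3  # retain this many recent checkpoints, per the guidance above


def save_checkpoint(state: dict, ckpt_dir: Path, step: int) -> Path:
    """Write a checkpoint atomically, then prune all but the newest KEEP_LAST."""
    ckpt_dir.mkdir(parents=True, exist_ok=True)
    final = ckpt_dir / f"step_{step:010d}.ckpt"
    tmp = final.with_suffix(".tmp")
    with tmp.open("wb") as f:
        pickle.dump(state, f)
        f.flush()
        os.fsync(f.fileno())  # make sure bytes reach disk before the rename
    os.replace(tmp, final)  # atomic on the same filesystem: no half-written .ckpt
    # Zero-padded step numbers make lexicographic order equal to step order.
    for old in sorted(ckpt_dir.glob("step_*.ckpt"))[:-KEEP_LAST]:
        old.unlink()
    return final


def load_latest(ckpt_dir: Path) -> Optional[dict]:
    """Return the newest readable checkpoint, falling back past corrupt files."""
    for path in sorted(ckpt_dir.glob("step_*.ckpt"), reverse=True):
        try:
            with path.open("rb") as f:
                return pickle.load(f)
        except Exception:  # unpickling can fail in several ways on bad data
            continue
    return None
```

Writing to a temporary file and renaming means an interruption during the save leaves the previous checkpoint untouched, which is the main reason to keep more than one.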

Automatic Job Resumption

When a spot instance is reclaimed, you need automation to request a new instance and resume training. On hyperscalers, AWS Batch and GCP’s Managed Instance Groups can automatically replace preempted instances. On specialized providers, scripts that monitor instance health and re-provision via API are straightforward to implement. Tools like SkyPilot automate this process across multiple clouds, automatically seeking the cheapest available spot capacity.
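For the "scripts that monitor and re-provision" case, the control flow is essentially a supervised retry loop. The sketch below shows only that loop: `provision` and `run_job` are hypothetical callables you would implement against your provider's API, and `Preempted` is an illustrative exception, not part of any real SDK.

```python
import time
from typing import Callable


class Preempted(Exception):
    """Raised by the (hypothetical) job runner when the instance is reclaimed."""


def supervise(
    run_job: Callable[[], None],
    provision: Callable[[], None],
    max_restarts: int = 50,
    backoff_s: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Provision capacity and run the job until it finishes.

    run_job is expected to resume from the latest checkpoint on each call.
    Returns the number of restarts that were needed.
    """
    restarts = 0
    while True:
        provision()  # request a fresh spot instance
        try:
            run_job()
            return restarts
        except Preempted:
            restarts += 1
            if restarts > max_restarts:
                raise
            # Back off a little longer each time, capped at 10 minutes,
            # since spot capacity may take a while to return.
            sleep(min(backoff_s * restarts, 600.0))
```

The `sleep` parameter exists so the loop can be exercised in tests without waiting; in production the default `time.sleep` applies.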

Graceful Shutdown Handling

Most providers send a termination signal (SIGTERM) 30-120 seconds before reclaiming a spot instance. Your training script should trap this signal and immediately trigger an emergency checkpoint save. Provided the save completes within the warning window, the maximum lost work is the few seconds after the emergency checkpoint, not the full interval since the last scheduled checkpoint. In PyTorch, this is accomplished by registering a signal handler that calls the checkpoint-saving function.

The Hybrid Approach: Combining Spot and On-Demand

The most cost-effective strategy for large-scale training is a hybrid approach that combines spot and on-demand instances within the same pipeline. A common pattern involves running the majority of GPU workers on spot instances, keeping a small number of “anchor” nodes on on-demand instances, and using the anchor nodes to coordinate checkpointing and data loading. This approach ensures that even if all spot instances are simultaneously reclaimed (a rare but possible event), the anchor nodes preserve the training state and can resume quickly when spot capacity becomes available again.

Another hybrid strategy is temporal: use on-demand instances during peak demand hours (typically weekday business hours), when spot availability is lowest, spot prices are highest, and interruptions are most frequent, and switch to spot instances during off-peak hours (nights and weekends) when prices plummet. This requires automation but can reduce costs by an additional 15-25% compared to pure spot usage.
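The temporal rule reduces to a small scheduling function. The sketch below assumes peak hours are 09:00 to 18:00 on weekdays in the provider region's local time; that window is an illustrative assumption, not a figure from any provider, and should be tuned against observed spot prices.

```python
from datetime import datetime

PEAK_START_HOUR = 9   # assumed start of weekday peak demand
PEAK_END_HOUR = 18    # assumed end of weekday peak demand


def capacity_type(now: datetime) -> str:
    """Return "on-demand" during assumed peak hours, otherwise "spot"."""
    is_weekday = now.weekday() < 5  # Monday is 0, Sunday is 6
    in_peak_hours = PEAK_START_HOUR <= now.hour < PEAK_END_HOUR
    return "on-demand" if is_weekday and in_peak_hours else "spot"


print(capacity_type(datetime.now()))
```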

Cost Savings Calculator

To illustrate the financial impact, consider a concrete scenario: a team training a 13B parameter model on 256B tokens using 32 H100 GPUs.

On-Demand (Lambda Labs @ $3.49/hr):

Duration: ~21 days continuous

Total GPU-hours: 32 × 504 = 16,128 hours

Total cost: $56,287

Spot (RunPod @ $1.99/hr, with 15% overhead for interruptions):

Effective duration: ~24 days (including restart overhead)

Total GPU-hours: 32 × 580 = 18,560 hours

Total cost: $36,934

Savings: $19,353 (34%)
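The arithmetic above can be reproduced, and adapted to your own rates, with a few lines of Python. This sketch uses the figures from the scenario: 504 wall-clock hours on-demand, and 580 hours on spot (504 hours plus roughly 15% restart overhead, rounded). The function name and the break-even calculation are additions for illustration.

```python
def training_cost(gpus: int, wall_clock_hours: float, rate_per_gpu_hour: float) -> float:
    """Total cost in dollars for a fixed-size GPU fleet."""
    return gpus * wall_clock_hours * rate_per_gpu_hour


GPUS = 32
ON_DEMAND_RATE = 3.49  # $/GPU-hour, Lambda Labs figure from the scenario
SPOT_RATE = 1.99       # $/GPU-hour, RunPod figure from the scenario

on_demand = training_cost(GPUS, 504, ON_DEMAND_RATE)
spot = training_cost(GPUS, 580, SPOT_RATE)
savings = on_demand - spot

print(f"On-demand: ${on_demand:,.2f}")
print(f"Spot:      ${spot:,.2f}")
print(f"Savings:   ${savings:,.2f} ({savings / on_demand:.0%})")

# Overhead at which spot stops being cheaper: extra hours cancel the discount.
break_even_overhead = ON_DEMAND_RATE / SPOT_RATE - 1
print(f"Break-even interruption overhead: {break_even_overhead:.0%}")
```

Computed from unrounded totals, the savings figure differs from the one above by about a dollar. At these rates the break-even overhead is around 75%, far above the 15% assumed in the scenario.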

For teams running several such training jobs per month, these savings add up to six figures annually. The engineering investment to build spot-tolerant pipelines (typically 2-4 weeks of a senior ML engineer’s time) pays for itself within the first month.

Real-World Case Studies

Case Study 1: AI Research Lab Using Spot for Hyperparameter Optimization

A university research lab needed to run 500 independent hyperparameter trials for a novel attention mechanism. Each trial required 4 hours on a single A100 GPU. Using on-demand pricing on Lambda Labs ($2.09/hr), the total cost would have been $4,180. By running all trials on Vast.ai spot instances ($0.70/hr for A100 80GB during off-peak hours), the lab completed the sweep for $1,400 — a 66% reduction. Approximately 8% of trials were interrupted by spot reclamations, but since each trial was independent, the interrupted trials were simply restarted on new instances, adding roughly 3% to the total compute time.

Case Study 2: Startup Mixing Spot and On-Demand for Pre-Training

An AI startup pre-training a 13B parameter model used a hybrid strategy: 24 GPUs on RunPod spot instances ($1.99/hr) during the compute-intensive forward/backward pass phases, and 8 GPUs on Lambda Labs on-demand ($3.49/hr) as “anchor nodes” responsible for checkpointing and data loading coordination. Over the 21-day training run, this approach cost approximately $38,000 compared to an estimated $56,000 for an all-on-demand configuration — a savings of $18,000 (32%). The startup experienced 12 spot interruptions during the run, each requiring 15-20 minutes for the orchestration system to provision replacement instances and resume from the latest checkpoint. Total downtime from interruptions was approximately 4 hours, representing less than 1% of the total training time.
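The blended cost in this case study can be sanity-checked from the stated fleet mix. The following sketch assumes all 32 GPUs were billed for the full 21 days (504 hours) and takes the upper bound of 20 minutes per interruption; `fleet_cost` is an illustrative helper.

```python
from typing import List, Tuple


def fleet_cost(groups: List[Tuple[int, float]], hours: float) -> float:
    """Cost of a mixed fleet given (gpu_count, rate_per_gpu_hour) groups."""
    return sum(gpus * rate * hours for gpus, rate in groups)


HOURS = 21 * 24  # 504 wall-clock hours

hybrid = fleet_cost([(24, 1.99), (8, 3.49)], HOURS)  # spot workers + anchors
all_on_demand = fleet_cost([(32, 3.49)], HOURS)
saved = all_on_demand - hybrid

print(f"Hybrid:        ${hybrid:,.2f}")
print(f"All on-demand: ${all_on_demand:,.2f}")
print(f"Savings:       ${saved:,.2f} ({saved / all_on_demand:.0%})")

# Downtime: 12 interruptions at up to 20 minutes each.
downtime_hours = 12 * 20 / 60
print(f"Downtime: {downtime_hours:.0f} h ({downtime_hours / HOURS:.1%} of the run)")
```

The results round to the approximate figures quoted in the case study.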

Ready to optimize your compute spend? Use our provider comparison tool to find the best spot pricing across all GPU types, or explore GPU specifications to match hardware to your workload requirements. For personalized recommendations, request quotes from multiple providers.

Frequently Asked Questions

How much warning do you get before a spot instance is reclaimed?

Warning times vary by provider. AWS provides a 2-minute warning via the instance metadata endpoint. GCP sends an ACPI G2 (soft off) signal and allows up to 30 seconds before the instance is stopped. RunPod typically provides 30-60 seconds. Vast.ai varies by host configuration. Your training script should be configured to save an emergency checkpoint immediately upon receiving the termination signal, regardless of the checkpoint schedule.
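On AWS, the 2-minute warning is exposed through the instance metadata service rather than pushed to your process, so something has to poll for it. The sketch below queries the `spot/instance-action` metadata path using an IMDSv2 session token; it only works from inside an EC2 instance, and the helper names and timeout value are illustrative assumptions.

```python
import json
import urllib.error
import urllib.request
from typing import Optional, Tuple

IMDS = "http://169.254.169.254/latest"


def fetch_instance_action(timeout: float = 1.0) -> Optional[str]:
    """Return the raw interruption notice, or None if none is scheduled."""
    token_req = urllib.request.Request(
        f"{IMDS}/api/token",
        method="PUT",
        headers={"X-aws-ec2-metadata-token-ttl-seconds": "60"},
    )
    with urllib.request.urlopen(token_req, timeout=timeout) as resp:
        token = resp.read().decode()
    req = urllib.request.Request(
        f"{IMDS}/meta-data/spot/instance-action",
        headers={"X-aws-ec2-metadata-token": token},
    )
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.read().decode()
    except urllib.error.HTTPError as err:
        if err.code == 404:  # the path is absent until a notice is issued
            return None
        raise


def parse_instance_action(body: Optional[str]) -> Optional[Tuple[str, str]]:
    """Extract (action, time) from a notice such as
    {"action": "terminate", "time": "2026-01-05T08:22:00Z"}."""
    if body is None:
        return None
    doc = json.loads(body)
    return doc["action"], doc["time"]
```

A training job would typically call `fetch_instance_action` every few seconds from a background thread and trigger its emergency checkpoint as soon as the result is not `None`.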

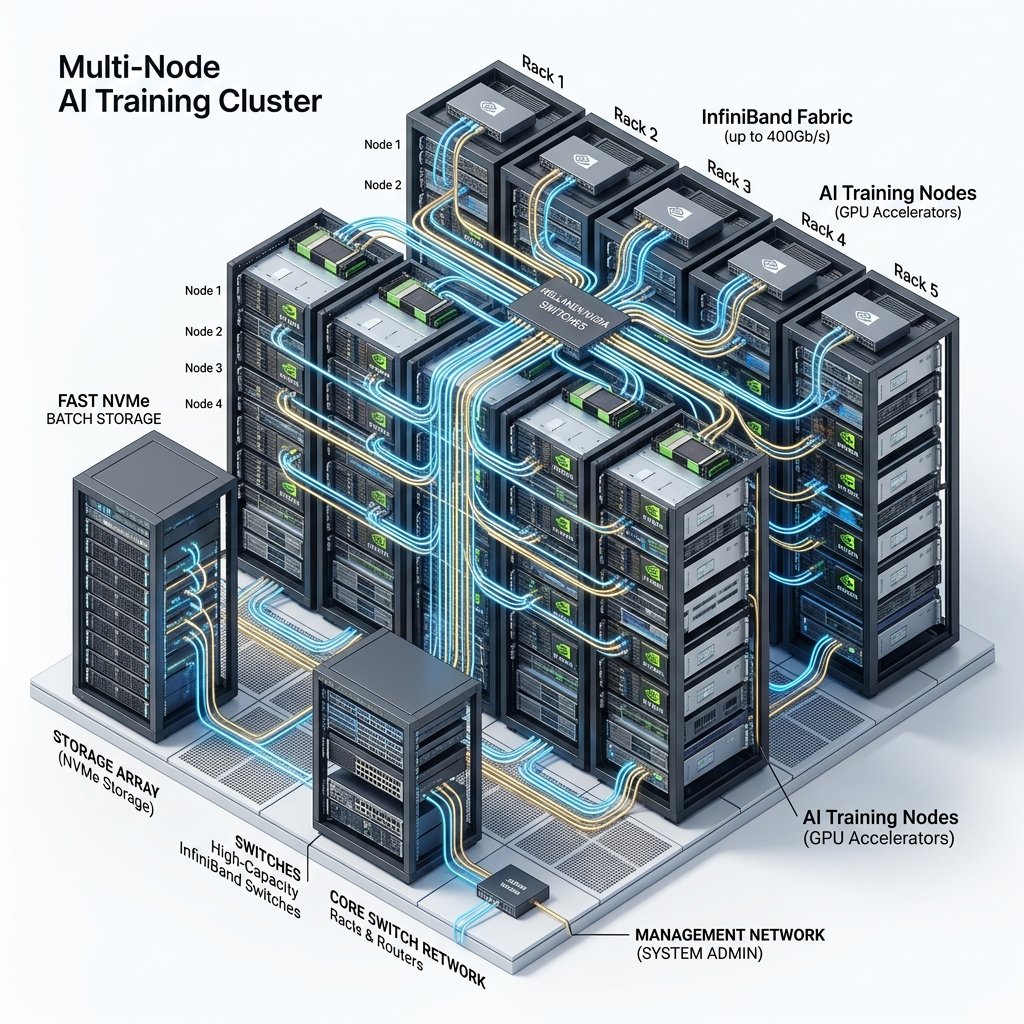

Can I use spot instances for multi-node distributed training?

Yes, but it requires careful engineering. If one node in a multi-node training job is reclaimed, the entire job must pause because distributed training requires all nodes to synchronize. Your orchestration layer must detect the lost node, save a checkpoint, wait for a replacement node to become available, and then restart all nodes from the checkpoint. Frameworks like DeepSpeed and PyTorch Elastic support elastic training that can adapt to changing node counts, though not all workloads support this gracefully.

Which GPU cloud provider offers the best spot pricing in 2026?

For absolute lowest prices, Vast.ai’s open marketplace consistently offers the cheapest spot GPU instances, with RTX 3090s available for under $0.10/hr. For a balance of price and reliability, RunPod’s spot pricing (H100 at $1.99/hr, RTX 4090 at $0.19/hr) offers competitive rates with more consistent availability and a cleaner user experience. Among hyperscalers, GCP preemptible instances typically offer slightly better spot rates than AWS.

What tools can automate spot instance management across multiple providers?

SkyPilot (from UC Berkeley) is the most popular open-source tool for multi-cloud spot management. It automatically finds the cheapest spot instances across AWS, GCP, Azure, Lambda, RunPod, and other providers, handles preemption recovery, and migrates workloads between clouds. Other tools include Determined AI (now part of HPE) for managed training orchestration with spot support, and custom solutions built on Kubernetes with the Karpenter autoscaler for cloud-native spot management. For simpler setups, a bash script monitoring instance health and re-provisioning via provider APIs is often sufficient.
