For European AI teams, the question of where to run GPU workloads is not merely a technical optimization problem; it is a legal imperative with potentially severe financial consequences. The General Data Protection Regulation (GDPR) governs how the personal data of individuals in the EU is collected, processed, and stored. When an AI model is trained on datasets containing personal information (names, email addresses, behavioral data, medical records), that training constitutes data processing under GDPR. Choosing a GPU cloud that supports GDPR compliance is therefore not optional for any organization processing the personal data of people in the EU.

The stakes are not theoretical. European data protection authorities have levied over €4.5 billion in GDPR fines since enforcement began in May 2018. Meta alone received a €1.2 billion fine in 2023 for transferring EU user data to US servers. For AI startups and scale-ups, a serious GDPR violation can be existential: fines can reach €20 million or 4% of global annual turnover, whichever is higher, on top of reputational damage that erodes customer trust.

This guide provides a thorough analysis of the legal landscape, the technical requirements for compliant AI infrastructure, and a detailed evaluation of European GPU cloud providers capable of hosting training and inference workloads within the European Economic Area (EEA).

- The lowest-risk way to train AI models on EU personal data is on compute infrastructure physically located within the EEA, covered by a signed Data Processing Agreement (DPA).

- Using US-headquartered clouds (AWS, GCP, Azure) in EU regions carries legal risk due to the US CLOUD Act, even with EU-US Data Privacy Framework protections.

- Sovereign European providers like Genesis Cloud (Germany) and Scaleway (France) have no US parent company, which removes the CLOUD Act exposure that applies to US-headquartered clouds.

- Beyond compliance, hosting inference in the EU can cut round-trip latency for European end users by roughly 70-110ms compared with servers on the US East Coast, improving user experience.

How AI Training Intersects with GDPR

GDPR applies whenever personal data is “processed” — and processing is defined extremely broadly. It includes collection, storage, organization, structuring, adaptation, retrieval, consultation, use, disclosure, and erasure. When a neural network is trained on data containing personal information, every forward pass and backward pass through the network constitutes processing.

This creates three distinct compliance obligations for AI teams:

1. Lawful Basis for Processing

Before training a model on personal data, you must establish a lawful basis under Article 6 of GDPR. For most AI applications, this means relying on the consent of data subjects, on a legitimate interest that is not overridden by individuals' rights and freedoms, or on processing that is necessary to perform a contract with the data subject. Relying on legitimate interest should be backed by a formal Legitimate Interests Assessment (LIA) documented in your records. Special categories of data, such as health records, additionally require a condition under Article 9, such as explicit consent.
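
As an illustration of keeping that documentation next to the data, the sketch below records the Article 6(1) basis for each training dataset and flags obvious gaps, such as relying on legitimate interest without an LIA, or on consent without evidence of how it was obtained (Article 7(1) requires controllers to be able to demonstrate consent). The `TrainingPurposeRecord` class and its fields are hypothetical, not a standard schema, and passing these checks does not by itself establish a valid lawful basis.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class LawfulBasis(Enum):
    """The six lawful bases in GDPR Article 6(1), points (a) to (f)."""
    CONSENT = "6(1)(a)"
    CONTRACT = "6(1)(b)"
    LEGAL_OBLIGATION = "6(1)(c)"
    VITAL_INTERESTS = "6(1)(d)"
    PUBLIC_TASK = "6(1)(e)"
    LEGITIMATE_INTERESTS = "6(1)(f)"


@dataclass
class TrainingPurposeRecord:
    """Hypothetical record of why a dataset may be used for model training."""
    dataset_id: str
    purpose: str
    basis: LawfulBasis
    lia_reference: Optional[str] = None     # where the documented LIA is stored
    consent_evidence: Optional[str] = None  # where proof of consent is stored

    def gaps(self) -> List[str]:
        """Return documentation gaps; an empty list means no obvious gap was found."""
        issues = []
        if not self.purpose.strip():
            issues.append("no purpose stated")
        if self.basis is LawfulBasis.LEGITIMATE_INTERESTS and not self.lia_reference:
            issues.append("legitimate interests relied on without a documented LIA")
        if self.basis is LawfulBasis.CONSENT and not self.consent_evidence:
            issues.append("consent relied on without evidence of how it was obtained")
        return issues


if __name__ == "__main__":
    record = TrainingPurposeRecord(
        dataset_id="support-tickets-2024",
        purpose="Train a customer service chatbot",
        basis=LawfulBasis.LEGITIMATE_INTERESTS,
    )
    print(record.gaps())
```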

2. Data Minimization and Purpose Limitation

GDPR requires that you process only the minimum amount of personal data necessary for your stated purpose. If you are training a customer service chatbot, you cannot include unrelated personal data (medical records, financial information) in the training dataset simply because it is available. Your data preprocessing pipeline must actively filter, anonymize, or pseudonymize personal data before it enters the training loop. Note that pseudonymized data is still personal data under GDPR; only data that has been effectively anonymized falls outside its scope.
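
A minimal sketch of such a preprocessing step, assuming records arrive as Python dicts: an allowlist drops every field the stated purpose does not need, and a keyed HMAC-SHA256 replaces the customer identifier with a stable pseudonym. The field names and allowlist are hypothetical examples for the chatbot scenario above. The key must be stored separately from the training data, since GDPR's definition of pseudonymization (Article 4(5)) requires the additional information to be kept separately.

```python
import hashlib
import hmac
from typing import Any, Dict

# Hypothetical allowlist for a customer service chatbot: everything else is dropped.
ALLOWED_FIELDS = {"ticket_text", "product", "customer_id"}
# Allowed fields that are direct identifiers and must be pseudonymized.
PSEUDONYMIZED_FIELDS = {"customer_id"}


def pseudonymize(value: str, key: bytes) -> str:
    """Keyed HMAC-SHA256: the same input always maps to the same pseudonym,
    but someone without the key cannot link the pseudonym back to the input."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_record(record: Dict[str, Any], key: bytes) -> Dict[str, Any]:
    """Keep only allowlisted fields and pseudonymize direct identifiers."""
    cleaned = {}
    for field, value in record.items():
        if field not in ALLOWED_FIELDS:
            continue  # e.g. medical or financial fields never reach the training set
        if field in PSEUDONYMIZED_FIELDS:
            value = pseudonymize(str(value), key)
        cleaned[field] = value
    return cleaned


if __name__ == "__main__":
    key = b"load-me-from-a-secrets-manager-not-source-code"  # placeholder key
    raw = {
        "customer_id": "C-1001",
        "ticket_text": "My router keeps disconnecting.",
        "product": "router-x",
        "diagnosis": "irrelevant medical note",
        "iban": "irrelevant financial data",
    }
    print(minimize_record(raw, key))
```

An allowlist only controls which columns survive. Free-text fields such as `ticket_text` can still contain names or email addresses, so they need separate scrubbing before training.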

3. Cross-Border Data Transfer Restrictions

This is where infrastructure selection becomes critical. GDPR restricts the transfer of personal data to countries outside the EEA that do not provide “adequate” data protection, and the European Commission maintains a list of countries with adequacy decisions. The United States has an adequacy decision based on the EU-US Data Privacy Framework (DPF), but it covers only US organizations that self-certify under the framework, and the mechanism remains legally fragile given the precedent set by the Schrems II ruling.
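
One practical safeguard is to refuse deployments outside the EEA before any data moves. The sketch below assumes you maintain your own mapping from provider region IDs to ISO 3166-1 country codes; the region IDs in the example are illustrative. It checks physical location only and says nothing about which jurisdiction the provider's parent company falls under.

```python
from typing import Dict, List

# EU member states plus Iceland, Liechtenstein and Norway, as ISO 3166-1 alpha-2 codes.
EEA_COUNTRIES = frozenset({
    "AT", "BE", "BG", "HR", "CY", "CZ", "DK", "EE", "FI", "FR",
    "DE", "GR", "HU", "IE", "IT", "LV", "LT", "LU", "MT", "NL",
    "PL", "PT", "RO", "SK", "SI", "ES", "SE",
    "IS", "LI", "NO",
})


def non_eea_regions(region_country: Dict[str, str], requested: List[str]) -> List[str]:
    """Return requested regions whose data center is outside the EEA or unknown."""
    return [r for r in requested if region_country.get(r) not in EEA_COUNTRIES]


if __name__ == "__main__":
    # Illustrative mapping you would maintain yourself from provider documentation.
    region_country = {"fr-par": "FR", "nl-ams": "NL", "pl-waw": "PL", "us-east": "US"}
    print(non_eea_regions(region_country, ["fr-par", "pl-waw", "us-east"]))  # ['us-east']
```

Unknown regions are treated as non-EEA, so a typo or a newly added region fails closed rather than silently passing.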

The Schrems II Problem and the CLOUD Act

The 2020 Schrems II judgment by the Court of Justice of the European Union (CJEU) invalidated the EU-US Privacy Shield. It upheld Standard Contractual Clauses (SCCs) in principle, but required data exporters to verify case by case, adding supplementary measures where needed, that the law of the destination country does not undermine them, which cast doubt on SCC-based transfers to the United States. The court found that US surveillance laws, particularly Section 702 of the Foreign Intelligence Surveillance Act (FISA) and Executive Order 12333, provide insufficient protection for EU personal data.

While the EU-US Data Privacy Framework (DPF) was adopted in 2023 to replace Privacy Shield, privacy advocates and legal scholars widely expect it to face a “Schrems III” challenge. The fundamental legal tension remains: the US CLOUD Act empowers US law enforcement to compel US-headquartered companies to produce data stored anywhere in the world, including on servers physically located within the EU. This means that training data processed by AWS Frankfurt, GCP Belgium, or Azure Netherlands is still theoretically accessible to US authorities via legal process directed at the US parent company.

For risk-averse organizations — particularly those in healthcare, finance, and government — the safest approach is to use GPU cloud providers that are entirely outside US legal jurisdiction. This means choosing providers headquartered in EU member states, governed by EU law, and with no US parent company.

European Sovereign GPU Cloud Providers

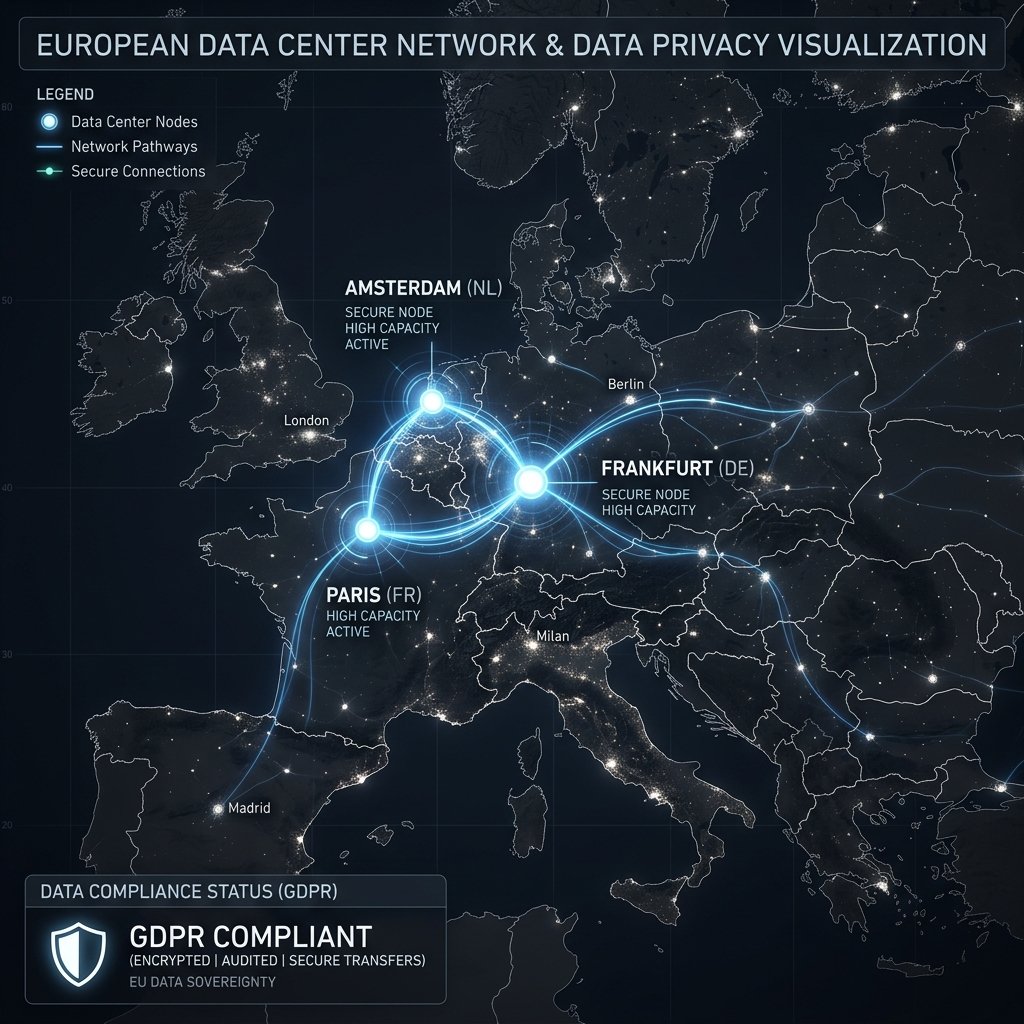

A growing number of European-headquartered GPU cloud providers offer complete legal isolation from US surveillance laws while providing competitive AI compute infrastructure.

Genesis Cloud (Germany)

Genesis Cloud operates exclusively from data centers in Germany and Norway, powered by 100% renewable energy. They offer NVIDIA H100 and L40S instances with competitive pricing and full GDPR compliance documentation, including a comprehensive Data Processing Agreement (DPA). Their infrastructure runs on European-manufactured networking equipment, further reducing the risk of foreign government access.

H100 Pricing: ~$3.89/hr on-demand

Best for: EU-based AI startups, healthcare AI, government research projects

Scaleway (France)

A subsidiary of the French telecommunications group Iliad, Scaleway operates data centers in Paris, Amsterdam, and Warsaw. They offer GPU instances (including H100) alongside a comprehensive cloud ecosystem including managed Kubernetes, object storage, and serverless functions — providing a European alternative to the full hyperscaler experience.

Hetzner Cloud (Germany)

While primarily known for budget-friendly cloud computing, Hetzner offers dedicated GPU servers from their data centers in Falkenstein and Helsinki. They are a popular choice for European AI teams with limited budgets who need guaranteed EU data residency.

OVHcloud (France)

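OVHcloud is one of Europe’s largest cloud infrastructure providers, operating over 40 data centers worldwide with a strong EU presence. They offer NVIDIA A100 and L40S GPU instances with full GDPR compliance and data sovereignty guarantees. Their SecNumCloud-qualified infrastructure meets the requirements of ANSSI, the French national cybersecurity agency, which are among the most demanding French government security standards for cloud services; check whether the specific GPU service you plan to use falls within the qualified scope.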

Using US Providers with EU Regions: Risk Assessment

AWS, Google Cloud, and Azure all operate data centers within the EU and offer region-locked deployments. Many teams choose to use these providers with data residency configurations that ensure data never leaves the EU region. This approach is legally defensible under the EU-US Data Privacy Framework, but carries residual risk.

The key question is: does the US CLOUD Act apply to data stored by AWS in Frankfurt? The legal consensus is “potentially yes.” AWS is a US-headquartered company, and a US court order could theoretically compel them to produce data from any server worldwide, regardless of physical location. AWS and other hyperscalers have publicly stated they would challenge such orders, but there are no guarantees.

For organizations with moderate risk tolerance — such as AI companies training on publicly available data or anonymized datasets — using a US provider with EU regions is generally considered acceptable. For organizations processing highly sensitive data (patient records, classified government data, children’s data), the sovereign European provider route eliminates this legal uncertainty entirely.

Technical Requirements for Compliant AI Infrastructure

Beyond provider selection, achieving GDPR compliance for AI workloads requires several technical measures:

- Data Processing Agreements (DPAs): Your GPU cloud provider is a “data processor” under GDPR. You must have a signed DPA specifying the nature and purpose of processing, data categories, retention periods, and the processor’s obligations regarding security, breach notification, and data deletion.

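- Encryption at Rest and in Transit: Encrypt personal data both when stored on disk and when transmitted over the network. GDPR Article 32 names encryption as an example of an appropriate security measure rather than prescribing specific algorithms; AES-256 for storage and TLS 1.3 (or at minimum TLS 1.2) for network transmission are widely accepted baselines.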

- Access Controls: Implement strict role-based access to training data and model weights. Logging and auditing all data access events is the standard way to demonstrate the accountability that Article 5(2) requires.

- Data Deletion Capabilities: Under Article 17 (Right to Erasure), data subjects can request deletion of their personal data. Your infrastructure must support removing specific data from training datasets and, where technically feasible, retraining or applying machine-unlearning techniques so the model “forgets” that data.

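- Data Protection Impact Assessment: For AI systems that process personal data at scale, a Data Protection Impact Assessment (DPIA) under Article 35 is generally mandatory before training begins, because the article requires one before any processing that is likely to result in a high risk to individuals, particularly when new technologies are used.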
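
The access-control, logging, and erasure requirements above can be combined in a small sketch. The roles, the in-memory `TrainingDataStore`, and the JSON-lines audit format are hypothetical stand-ins for whatever your storage and identity systems provide. Deleting records from the dataset does not remove what an already-trained model has learned; that still requires retraining or an unlearning technique.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Dict, List, Set

# Hypothetical role model: which actions each role may perform on training data.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "ml_engineer": {"read"},
    "data_steward": {"read", "erase"},
}


@dataclass
class TrainingDataStore:
    """In-memory stand-in for a training dataset keyed by pseudonymous subject ID."""
    records: Dict[str, List[str]] = field(default_factory=dict)
    audit_log: List[str] = field(default_factory=list)
    erased_subjects: Set[str] = field(default_factory=set)

    def _authorize(self, user: str, role: str, action: str, subject_id: str) -> None:
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        # Every attempt is logged as one JSON line, including denied attempts.
        self.audit_log.append(json.dumps({
            "ts": time.time(), "user": user, "role": role,
            "action": action, "subject": subject_id, "allowed": allowed,
        }))
        if not allowed:
            raise PermissionError(f"role {role!r} may not {action}")

    def read(self, user: str, role: str, subject_id: str) -> List[str]:
        self._authorize(user, role, "read", subject_id)
        return list(self.records.get(subject_id, []))

    def erase(self, user: str, role: str, subject_id: str) -> int:
        """Handle an Article 17 request: delete the subject's records and remember
        the ID so future dataset builds can exclude it. Returns records removed."""
        self._authorize(user, role, "erase", subject_id)
        removed = len(self.records.pop(subject_id, []))
        self.erased_subjects.add(subject_id)
        return removed


if __name__ == "__main__":
    store = TrainingDataStore(records={"subj-1": ["ticket A", "ticket B"]})
    print(store.read("alice", "ml_engineer", "subj-1"))
    try:
        store.erase("alice", "ml_engineer", "subj-1")
    except PermissionError as exc:
        print("denied:", exc)
    print(store.erase("bob", "data_steward", "subj-1"))
    print(len(store.audit_log))
```

Keeping the erased (pseudonymous) IDs in a suppression set is one design choice for making sure a later re-ingestion from an upstream source does not quietly reintroduce the deleted data.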

Latency Benefits of European Hosting

Compliance is not the only reason to host GPU infrastructure in Europe. Physics also matters. If your AI application serves European users — a chatbot for a German insurance company, an image generation tool for a French design agency, a document analysis system for a UK law firm — hosting inference nodes in Europe dramatically reduces latency.

A request from Berlin to an inference server in Virginia travels approximately 6,400 km through undersea cables, adding 80-120ms of round-trip latency. The same request to a server in Frankfurt travels 400 km, with round-trip latency under 10ms. For real-time conversational AI, this 100ms difference is the gap between a snappy, responsive experience and a noticeably sluggish one.
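
These figures can be sanity-checked against the physical floor. Assuming light in optical fiber travels at about c/1.47 (roughly 204,000 km/s), the sketch computes the minimum round-trip time for the 6,400 km and 400 km distances quoted above. Real cable routes are longer than these distances and every router hop adds delay, which is why measured latencies sit above the floor.

```python
# Speed of light in vacuum (km/s) and an assumed refractive index for optical fiber.
C_VACUUM_KM_S = 299_792.458
FIBER_REFRACTIVE_INDEX = 1.47


def min_rtt_ms(one_way_km: float) -> float:
    """Lower bound on round-trip time over fiber: there and back at c / n, in ms."""
    speed_km_s = C_VACUUM_KM_S / FIBER_REFRACTIVE_INDEX
    return 2 * one_way_km / speed_km_s * 1000


if __name__ == "__main__":
    for route, km in [("Berlin -> Virginia", 6_400), ("Berlin -> Frankfurt", 400)]:
        print(f"{route}: at least {min_rtt_ms(km):.1f} ms round trip")
```

The floor is about 62.8ms for the transatlantic path versus about 3.9ms for Frankfurt, so most of the gap is physics that no amount of server tuning can remove.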

The EU AI Act: Additional Compliance Layer

Beyond GDPR, the EU AI Act, which entered into force in August 2024 and applies in stages (prohibited practices from February 2025, general-purpose AI obligations from August 2025, and most high-risk requirements from August 2026), introduces additional compliance obligations specifically for AI systems. The Act classifies AI applications into risk categories (unacceptable, high-risk, limited, and minimal risk) and imposes varying requirements based on classification.

For teams deploying high-risk AI systems (such as AI used in healthcare diagnostics, credit scoring, or employment screening), the Act mandates detailed technical documentation, human oversight mechanisms, and logging of AI system activities. These obligations fall on you as the provider or deployer of the AI system, but they have direct implications for your GPU cloud infrastructure, which must support comprehensive logging of training runs, model versioning, and data lineage tracking. Providers like Genesis Cloud and Scaleway are actively building EU AI Act compliance tooling into their platforms, giving them a regulatory advantage over US-based competitors.
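
A minimal sketch of the data-lineage side, assuming training data lives in local files: before a run starts, write a manifest that ties a run ID and model version to SHA-256 hashes of the exact dataset files and the hyperparameters used. The manifest layout and function names are hypothetical; experiment trackers and data-versioning tools offer richer versions of the same idea.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path
from typing import Dict, Iterable


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large dataset shards do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_run_manifest(run_id: str, model_version: str, dataset_files: Iterable[str],
                       hyperparameters: Dict[str, object], out_dir: str) -> Path:
    """Write a JSON manifest linking a training run to its exact inputs."""
    manifest = {
        "run_id": run_id,
        "model_version": model_version,
        "created_at": datetime.now(timezone.utc).isoformat(),
        "datasets": {str(p): sha256_file(Path(p)) for p in dataset_files},
        "hyperparameters": hyperparameters,
    }
    out_path = Path(out_dir) / f"{run_id}.manifest.json"
    out_path.write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return out_path
```

Because the hashes identify file contents rather than file names, an auditor can later confirm that a given model version was trained on a dataset from which erased records had already been removed.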

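The Act also introduces obligations for providers of general-purpose AI (GPAI) models, the category that covers foundation models. If you are training a foundation model that will be made available to third parties, you must prepare technical documentation about the model and its training, supply information to downstream providers who integrate it, put in place a policy to comply with EU copyright law, and publish a sufficiently detailed summary of the content used for training. Models classified as posing systemic risk face further obligations, including model evaluations, risk mitigation, and serious-incident reporting. The computational infrastructure you use must support these auditability and documentation requirements.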


Frequently Asked Questions

Does training an AI model on personal data count as data processing under GDPR?

Yes. The European Data Protection Board (EDPB) has confirmed that training machine learning models on personal data constitutes data processing. This includes the initial training pass, any subsequent fine-tuning, and even inference if the model processes personal data in its inputs. Organizations must ensure they have a lawful basis for processing and comply with all GDPR obligations, including data minimization, purpose limitation, and individuals’ rights.

Can I use AWS EU regions and still be GDPR compliant?

Using AWS EU regions (Frankfurt, Ireland, Stockholm) with proper data residency controls is generally considered GDPR-compliant under the current EU-US Data Privacy Framework. However, there is residual legal risk because the US CLOUD Act may enable US authorities to compel data access regardless of server location. For highly sensitive data categories (health, financial, children’s data), European sovereign providers eliminate this risk entirely.

What is the cheapest GDPR-compliant GPU cloud for AI training?

Among fully sovereign EU providers, Hetzner offers the most budget-friendly GPU options for basic workloads. For H100-class hardware with full GDPR compliance, Genesis Cloud at approximately $3.89/hr and Scaleway offer competitive pricing. For teams comfortable with US providers using EU regions, RunPod and FluidStack EU instances offer lower rates but with the CLOUD Act caveat. The total cost difference between GDPR-compliant sovereign hosting and standard cloud hosting is typically 10-20% — a modest premium for eliminating substantial regulatory risk.
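
To put the premium in concrete terms, the sketch below assumes the ~$3.89/hr Genesis Cloud figure is a per-GPU hourly rate, an 8-GPU node running around the clock, and the 10-20% premium quoted above. It is arithmetic on those assumptions, not a price quote.

```python
HOURS_PER_MONTH = 730  # 8,760 hours per year / 12


def monthly_cost(price_per_gpu_hour: float, gpus: int, utilization: float = 1.0) -> float:
    """Monthly bill for a GPU cluster at the given average utilization (0 to 1)."""
    return price_per_gpu_hour * gpus * HOURS_PER_MONTH * utilization


def premium_paid(sovereign_cost: float, premium_rate: float) -> float:
    """Extra spend inside a sovereign bill that is premium_rate above a baseline:
    sovereign = baseline * (1 + r), so premium = sovereign - sovereign / (1 + r)."""
    return sovereign_cost - sovereign_cost / (1 + premium_rate)


if __name__ == "__main__":
    bill = monthly_cost(3.89, gpus=8)  # assumed: 8 GPUs, 24/7
    print(f"Monthly bill: ${bill:,.0f}")
    for rate in (0.10, 0.20):
        print(f"{rate:.0%} premium: ${premium_paid(bill, rate):,.0f} of that bill")
```

Under these assumptions the node costs about $22,718 per month, of which roughly $2,065 to $3,786 is the sovereignty premium.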

Do I need a Data Processing Agreement with my GPU cloud provider?

Yes. Under Article 28 of GDPR, when a GPU cloud provider processes personal data on your behalf (as a data processor), you must have a signed Data Processing Agreement (DPA) in place. The DPA must specify the nature and purpose of processing, data categories, retention periods, the processor’s security obligations, and their duties regarding data subject rights and breach notification. Most major providers offer standardized DPAs — verify that your provider’s DPA meets your specific compliance requirements before beginning any data processing.
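
When reviewing a provider's DPA, a simple checklist against Article 28(3) helps spot gaps. The element list below is a paraphrase of the article, not the legal text, and the keys are hypothetical labels you would assign to clauses while reading the contract.

```python
from typing import Dict, List, Set

# Paraphrase of what GDPR Article 28(3) says a processor contract must set out.
# The keys are hypothetical labels for tagging clauses while reviewing a DPA.
ARTICLE_28_3: Dict[str, str] = {
    "subject_and_duration": "Subject matter and duration of the processing",
    "nature_and_purpose": "Nature and purpose of the processing",
    "data_and_subjects": "Type of personal data and categories of data subjects",
    "controller_rights": "Obligations and rights of the controller",
    "instructions": "(a) Processing only on documented instructions",
    "confidentiality": "(b) Confidentiality of authorised personnel",
    "security": "(c) Security measures required by Article 32",
    "subprocessors": "(d) Conditions for engaging sub-processors",
    "data_subject_rights": "(e) Assistance with data subject rights requests",
    "arts_32_36": "(f) Assistance with Articles 32-36 (security, breaches, DPIAs)",
    "deletion_or_return": "(g) Deletion or return of data when the service ends",
    "audits": "(h) Information and audits to demonstrate compliance",
}


def missing_elements(covered: Set[str]) -> List[str]:
    """List the Article 28(3) elements not yet matched to a clause in the DPA."""
    return [text for key, text in ARTICLE_28_3.items() if key not in covered]


if __name__ == "__main__":
    covered = set(ARTICLE_28_3) - {"subprocessors", "audits"}
    for item in missing_elements(covered):
        print("missing:", item)
```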
