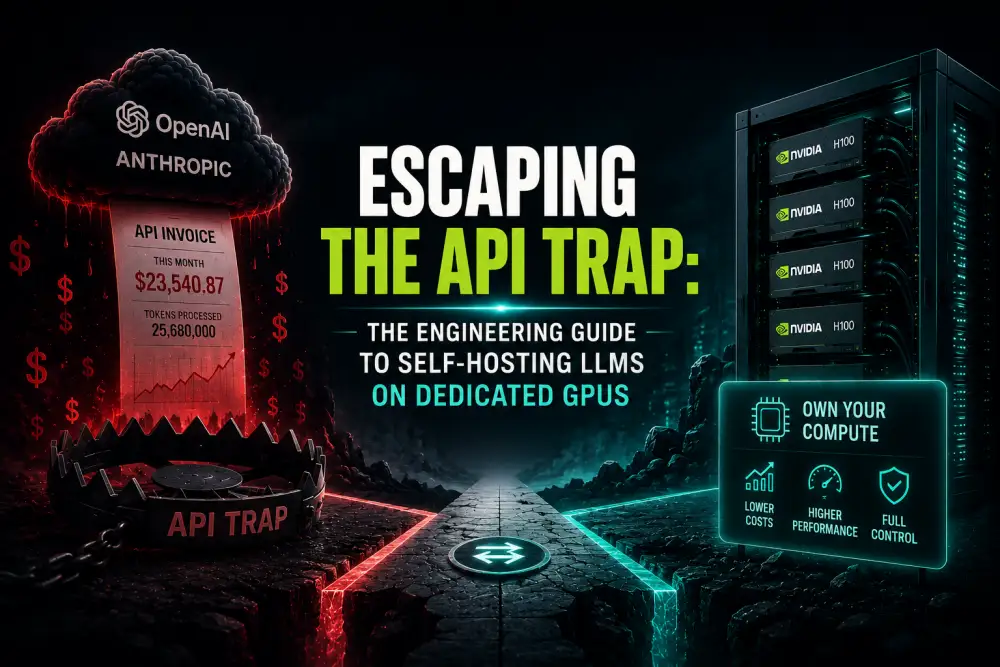

Your API bill just hit five figures for the first time. The CFO is panicking. The CEO is asking if we can “just use a cheaper model.”

As an engineering leader, you know the inevitable reality: it’s time to bring your inference in-house and transition from managed APIs to self-hosted LLMs.

But making the switch isn’t as simple as clicking “deploy” on Hugging Face.

When you rely on OpenAI, you are outsourcing a massive amount of incredibly complex infrastructure engineering. When you take that in-house, you are suddenly responsible for latency, uptime, batching, and hardware utilization.

Here is what it actually takes to make the leap from API dependency to owning your compute.

The Hardware Reality Check

The first shock for most teams moving to dedicated GPUs is the sheer scale of the hardware required for modern models.

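You can’t run a 70B-parameter model (like Llama 3 70B) on a single consumer GPU: at 16-bit precision the weights alone take about 140 GB, while a high-end consumer card such as the RTX 4090 has 24 GB. You need serious VRAM, which means leasing enterprise hardware like NVIDIA A100s or H100s, and typically several of them, since even an 80 GB card cannot hold those weights on its own.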
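To see why, here is a back-of-the-envelope estimate in Python. It is a rough sketch that counts model weights only (parameters × bytes per parameter) and ignores the KV cache, activations, and framework overhead, all of which need extra headroom on top. The 80 GB default matches the 80 GB A100 and H100 cards, 24 GB matches a top-end consumer card such as the RTX 4090, and the helper names are our own.

```python
import math

# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}

def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9

def min_gpus_for_weights(num_params: float, precision: str, gpu_mem_gb: float = 80.0) -> int:
    """Lower bound on GPU count: weights only, no KV cache or runtime overhead."""
    return math.ceil(weight_memory_gb(num_params, precision) / gpu_mem_gb)

if __name__ == "__main__":
    params = 70e9  # a 70B-parameter model such as Llama 3 70B
    for precision in BYTES_PER_PARAM:
        gb = weight_memory_gb(params, precision)
        print(f"{precision}: ~{gb:.0f} GB of weights, "
              f"at least {min_gpus_for_weights(params, precision)} x 80 GB GPU(s), "
              f"or {min_gpus_for_weights(params, precision, gpu_mem_gb=24.0)} x 24 GB consumer card(s)")
```

Under these assumptions, a 70B model needs about 140 GB at FP16: at least two 80 GB GPUs, or six 24 GB consumer cards, before any KV cache. Quantizing to 4-bit shrinks the weights to roughly 35 GB, with a possible cost in output quality.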

And finding that hardware isn’t easy.

The major hyperscalers (AWS, GCP, Azure) are often supply-constrained or mandate brutal 3-year commitments for H100 allocations. To find flexible, on-demand compute, engineering teams have to look at specialized GPU clouds.

This is exactly why we built the AI Compute Threshold Report. We analyzed over 150 providers to understand where the market actually stands, and exactly when it makes financial sense for an engineering team to take on the overhead of managing this hardware.

The Software Stack: Beyond the Docker Container

Once you have the metal, you need the software. And the ecosystem is moving extremely fast.

You aren’t just spinning up a simple Flask API. To get anywhere near the throughput of commercial APIs, you need a specialized inference engine.

- vLLM or TensorRT-LLM: You need an inference server that supports continuous batching and efficient KV-cache memory management (such as vLLM’s PagedAttention) to maximize GPU utilization.

- Load Balancing: When (not if) a GPU node goes down, your load balancer needs to instantly route traffic to healthy nodes without dropping requests.

- Observability: You need deep metrics. Token generation speed, time-to-first-token (TTFT), and GPU temperature monitoring are no longer optional.
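Continuous batching is easiest to see in a toy model. The Python sketch below is a simplification, not how vLLM or TensorRT-LLM are implemented: it assumes every decode step costs the same regardless of batch size, ignores prefill, and leaves out PagedAttention entirely (that technique manages KV-cache memory rather than scheduling). Static batching holds each batch until its longest request finishes; continuous batching refills a slot the moment a request completes.

```python
from collections import deque
from typing import List

def static_batching_steps(output_lengths: List[int], batch_size: int) -> int:
    """Each batch runs until its longest request finishes; queued requests wait for the whole batch."""
    steps = 0
    for i in range(0, len(output_lengths), batch_size):
        steps += max(output_lengths[i:i + batch_size])
    return steps

def continuous_batching_steps(output_lengths: List[int], batch_size: int) -> int:
    """Finished requests free their slot immediately; queued requests join at the next step.

    Assumes every output length is at least 1 token.
    """
    queue = deque(output_lengths)
    active: List[int] = []  # tokens still to generate for each in-flight request
    steps = 0
    while queue or active:
        # Admit waiting requests into any free slots before the next decode step.
        while queue and len(active) < batch_size:
            active.append(queue.popleft())
        # One decode step produces one token per active request; drop requests that just finished.
        steps += 1
        active = [remaining - 1 for remaining in active if remaining > 1]
    return steps

if __name__ == "__main__":
    # Output lengths in tokens: one long request and three short ones per batch of four.
    lengths = [100, 5, 5, 5, 100, 5, 5, 5]
    print(f"static: {static_batching_steps(lengths, batch_size=4)}")          # static: 200
    print(f"continuous: {continuous_batching_steps(lengths, batch_size=4)}")  # continuous: 105
```

With one long and three short requests per batch, the static scheduler spends 200 steps while the continuous one finishes in 105, because the second long request no longer waits for the first one to finish.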
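In production, node failover usually lives in a dedicated load balancer or service mesh, but the core behavior fits in a few lines. The Python sketch below assumes a hypothetical `send(node, prompt)` transport function that returns a completion or raises on failure; the node names are placeholders. It round-robins across nodes, takes a failing node out of rotation, and retries the request on the next node instead of dropping it.

```python
from typing import Callable, Dict, List

class FailoverBalancer:
    """Round-robin load balancer that skips unhealthy nodes and retries on failure."""

    def __init__(self, nodes: List[str], send: Callable[[str, str], str]) -> None:
        self.nodes = list(nodes)
        self.send = send  # hypothetical transport: send(node, prompt) -> completion
        self.healthy: Dict[str, bool] = {node: True for node in self.nodes}
        self._cursor = 0

    def route(self, prompt: str) -> str:
        # Try each node at most once per request, starting at the round-robin cursor.
        for _ in range(len(self.nodes)):
            node = self.nodes[self._cursor]
            self._cursor = (self._cursor + 1) % len(self.nodes)
            if not self.healthy[node]:
                continue
            try:
                return self.send(node, prompt)
            except Exception:
                # Take the node out of rotation; the request moves on to the next node.
                self.healthy[node] = False
        raise RuntimeError("no healthy inference nodes available")

    def mark_healthy(self, node: str) -> None:
        """Hook for an external health checker once a node recovers."""
        self.healthy[node] = True

if __name__ == "__main__":
    def fake_send(node: str, prompt: str) -> str:
        if node == "gpu-node-1":
            raise ConnectionError("node unreachable")
        return f"{node} answered: {prompt}"

    lb = FailoverBalancer(["gpu-node-1", "gpu-node-2", "gpu-node-3"], fake_send)
    print(lb.route("ping"))          # gpu-node-2 answered: ping
    print(lb.healthy["gpu-node-1"])  # False
```

Retrying on another node is generally safe here because a generation request does not change server-side state; a separate health checker is assumed to call `mark_healthy` when a node comes back.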
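Time-to-first-token and generation speed can both be derived from the arrival times of streamed tokens. The Python sketch below assumes `stream` is any iterable that yields tokens as they arrive (for example, from a streaming client pointed at your inference server) and that it is consumed right as the request is issued; the clock is injectable so the function can be tested deterministically, and the names are our own. GPU temperature is a hardware metric and typically comes from NVIDIA tooling such as `nvidia-smi` or DCGM instead.

```python
import time
from dataclasses import dataclass
from typing import Callable, Iterable, Optional

@dataclass
class StreamMetrics:
    ttft_s: float               # seconds from request start to the first token
    tokens: int                 # tokens received in total
    decode_tokens_per_s: float  # generation speed after the first token

def measure_stream(stream: Iterable[str],
                   clock: Callable[[], float] = time.perf_counter) -> StreamMetrics:
    """Consume a token stream and compute TTFT and decode throughput."""
    start = clock()
    first: Optional[float] = None
    last = start
    count = 0
    for _token in stream:
        now = clock()
        if first is None:
            first = now  # arrival time of the first token
        last = now
        count += 1
    if first is None:
        raise ValueError("stream produced no tokens")
    decode_time = last - first
    # Tokens after the first, divided by the time spent producing them.
    rate = (count - 1) / decode_time if decode_time > 0 else 0.0
    return StreamMetrics(ttft_s=first - start, tokens=count, decode_tokens_per_s=rate)

if __name__ == "__main__":
    # Simulated stream standing in for a real streaming response.
    def fake_stream():
        time.sleep(0.2)  # stands in for queueing and prefill before the first token
        for tok in ["Hello", ",", " world", "!"]:
            yield tok
            time.sleep(0.05)

    print(measure_stream(fake_stream()))
```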

The Hybrid Routing Approach

The smartest engineering teams don’t make a hard cut from 100% API to 100% self-hosted overnight. They use Hybrid Routing.

They keep the heavy, highly complex reasoning tasks routed to GPT-4o or Claude 3.5.

But they route the massive, high-volume, repetitive tasks (like data extraction, summarization, or classification) to a self-hosted Llama 3 70B cluster running on leased H100s.
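At its simplest, a hybrid router is a lookup on task type. In the Python sketch below, the task categories, backend labels, and model identifiers are placeholders, not any provider’s real API names. It also falls back to the managed API when the self-hosted cluster is unavailable.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Route:
    backend: str  # "self_hosted" or "managed_api"
    model: str    # placeholder model identifier

# Hypothetical routing table: the high-volume, repetitive task types.
SELF_HOSTED_TASKS = frozenset({"extraction", "summarization", "classification"})

SELF_HOSTED = Route(backend="self_hosted", model="llama-3-70b")
MANAGED_API = Route(backend="managed_api", model="gpt-4o")

def choose_route(task_type: str, self_hosted_up: bool = True) -> Route:
    """Pick a backend for one request based on its task type."""
    if task_type in SELF_HOSTED_TASKS and self_hosted_up:
        return SELF_HOSTED
    # Complex reasoning, unknown task types, and cluster outages all go to the managed API.
    return MANAGED_API

if __name__ == "__main__":
    for task in ["classification", "multi_step_reasoning"]:
        print(task, "->", choose_route(task))
    print("summarization (cluster down) ->", choose_route("summarization", self_hosted_up=False))
```

Defaulting anything unrecognized to the managed API is the conservative choice: a misrouted request costs a little more money rather than degrading quality.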

Splitting traffic this way drastically reduces costs while maintaining high quality where it matters. But determining the exact point to implement it requires precise math.
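The core of that math is a break-even calculation: the fixed monthly cost of leased GPUs divided by the API’s per-token price. The Python sketch below assumes a single blended API price per million tokens, GPUs billed around the clock at an hourly rate, and an optional fixed MLOps overhead. The example numbers are illustrative placeholders, not quotes from any provider or findings from the report. It also checks whether the cluster can actually serve that volume, using a sustained throughput figure you would need to measure yourself.

```python
HOURS_PER_MONTH = 730  # average hours per month (8,760 / 12)

def self_hosted_monthly_cost(num_gpus: int, gpu_hourly_usd: float,
                             ops_monthly_usd: float = 0.0) -> float:
    """Leased GPUs billed around the clock, plus any fixed MLOps overhead."""
    return num_gpus * gpu_hourly_usd * HOURS_PER_MONTH + ops_monthly_usd

def break_even_tokens_per_month(api_usd_per_million: float, num_gpus: int,
                                gpu_hourly_usd: float, ops_monthly_usd: float = 0.0) -> float:
    """Monthly token volume at which the API bill equals the self-hosted cost."""
    fixed = self_hosted_monthly_cost(num_gpus, gpu_hourly_usd, ops_monthly_usd)
    return fixed / api_usd_per_million * 1_000_000

def monthly_capacity_tokens(cluster_tokens_per_s: float) -> float:
    """Tokens the cluster can generate in a month at a sustained (measured) throughput."""
    return cluster_tokens_per_s * HOURS_PER_MONTH * 3600

if __name__ == "__main__":
    # Illustrative placeholders only: not real prices or benchmarks.
    breakeven = break_even_tokens_per_month(api_usd_per_million=5.0, num_gpus=8,
                                            gpu_hourly_usd=2.0, ops_monthly_usd=10_000)
    capacity = monthly_capacity_tokens(cluster_tokens_per_s=2_000)
    print(f"break-even: {breakeven:,.0f} tokens/month")  # break-even: 4,336,000,000 tokens/month
    print(f"capacity: {capacity:,.0f} tokens/month")     # capacity: 5,256,000,000 tokens/month
    if breakeven <= capacity:
        print("self-hosting can pay off at volumes between these two numbers")
    else:
        print("cluster is too small to reach break-even")
```

Below break-even, the API stays cheaper; above the cluster’s capacity, you need more GPUs or API overflow, which is exactly where hybrid routing helps.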

If you are trying to convince your leadership team to invest in dedicated GPUs, you need data. You need to prove that the MLOps infrastructure for GPUs is worth the investment.

Read the AI Compute Threshold Report. We’ve mapped the exact token-volume breakpoint where the math demands a switch to dedicated hardware. Run your numbers through our diagnostic, and stop guessing about your infrastructure.

Get personalised, no-commitment quotes from top AI infrastructure providers in under 2 minutes.